X is tightening the screws on AI-driven misinformation, announcing that creators who upload AI-generated videos depicting armed conflict without clear disclosure will lose access to the platform’s ad revenue sharing. Offending accounts face a three-month suspension from payouts, with repeat violations leading to permanent removal from the program.

What the policy says about unlabeled AI war content on X

According to the company’s head of product, the rule targets users who exploit generative AI to make battlefield scenes or war footage appear real. If the content is not labeled as AI-made, X will suspend the creator from its revenue-sharing program for 90 days. After the suspension ends, creators who continue to share misleading, unlabeled AI war content risk being permanently cut off from payouts.

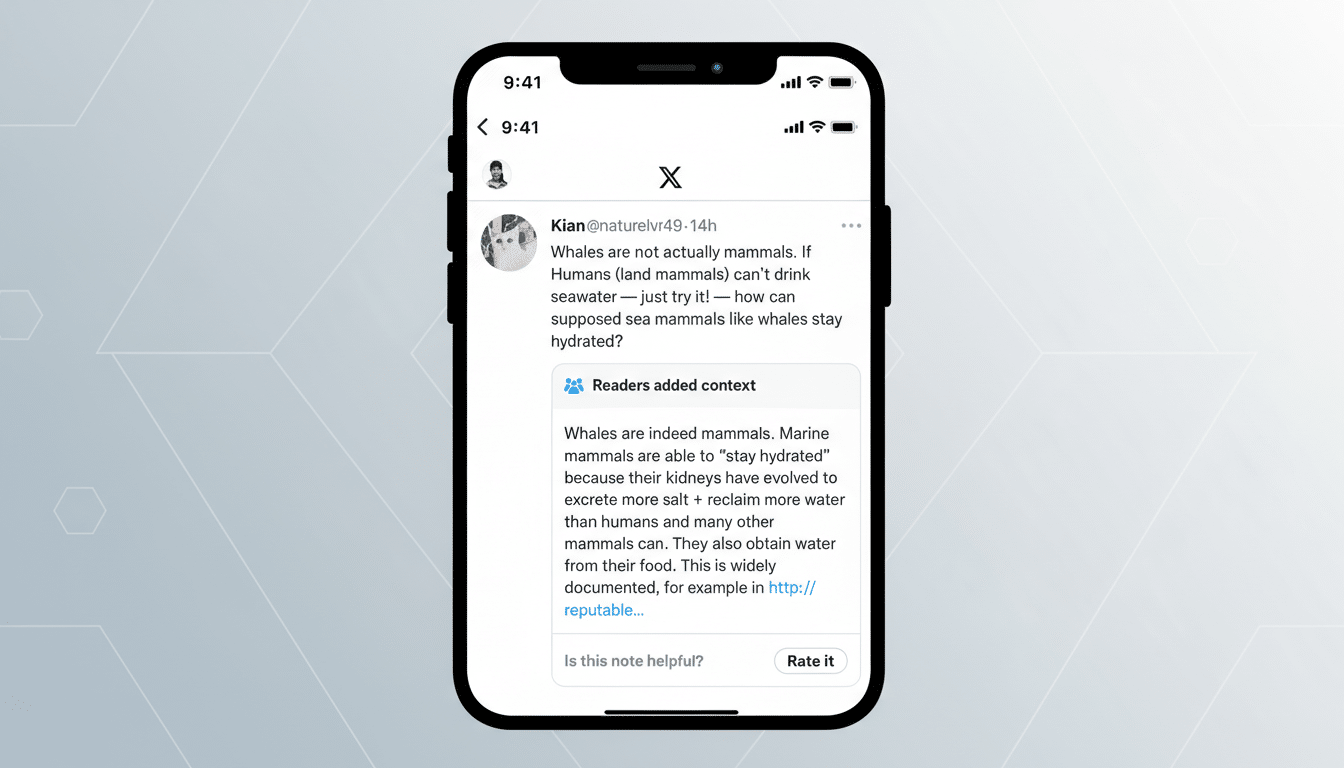

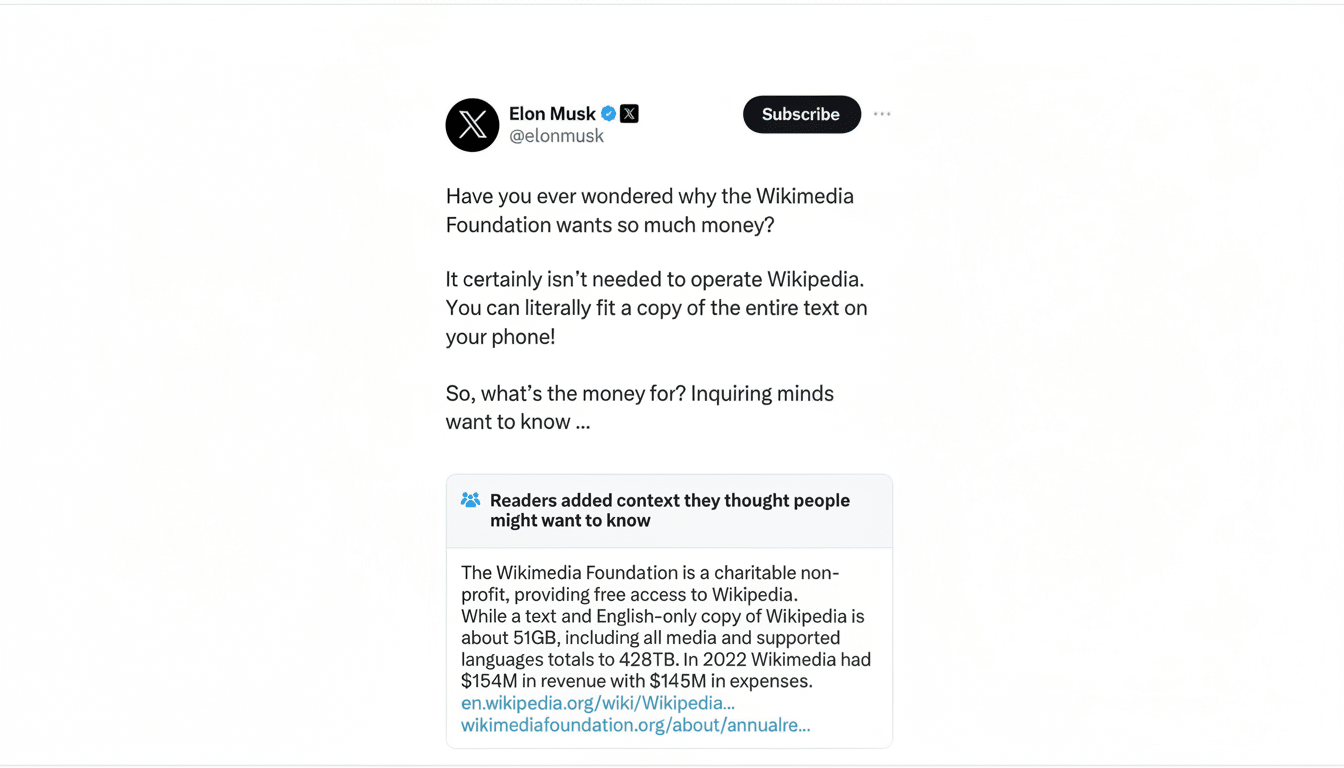

X says it will flag violations using a mix of internal AI-detection tools and its crowdsourced fact-checking feature, Community Notes, which attaches context to posts when contributors reach consensus. The company framed the move as an authenticity safeguard during armed conflicts, when misleading visuals can spread quickly and influence public perception.

Why it matters for AI-generated war content online

Generative AI has slashed the cost and time needed to fabricate convincing war footage, making old verification playbooks less reliable. Researchers and open-source investigators have already documented waves of synthetic images and videos purporting to show combat, refugees, or atrocities, which then get amplified by engagement-driven feeds.

Public anxiety is high. The Reuters Institute has reported that more than half of news consumers worry about distinguishing real from fake content online, with concerns especially acute around war and politics. In that environment, even brief exposure to a convincing fake can distort narratives, overwhelm fact-checkers, and put journalists and civilians at risk.

Enforcement challenges and gray areas in AI war posts

The hardest part will be drawing lines. What qualifies as “AI-generated” when creators blend real footage with synthetic overlays or use AI to upscale, colorize, or alter historical material? Does stylized or satirical content require the same labeling as photorealistic hoaxes? X has not detailed how granular its detection will be or what form disclosure must take.

Technical detection remains imperfect. Watermarks and provenance standards such as C2PA can help, but not all models embed signals, and many indicators can be stripped or compressed away. Community Notes adds a valuable layer of collective intelligence, yet it relies on contributor availability, language coverage, and sufficient agreement to publish a note before a misleading clip goes viral.

Economic incentives at stake in X’s creator payouts

X’s Creator Revenue Sharing Program pays eligible posters a share of ad revenue tied to post engagement. Participation typically requires an active paid subscription, compliance with platform rules, and meeting audience thresholds. Supporters say it rewards originality; critics argue it can nudge creators toward outrage bait and sensationalism because more reactions mean more money.

By targeting payments rather than the posts themselves, X is trying to choke off the financial upside for synthetic war footage without broadly expanding takedowns. The bet is behavioral: remove the monetary reward, and you reduce the volume of misleading conflict content. However, the policy is narrow. AI-made political misinformation, celebrity deepfakes, and deceptive product pitches still fall outside the specific “armed conflict” scope, unless they trigger other rules.

How it compares to rival platforms’ AI disclosure rules

Major platforms are converging on disclosure, but with different levers. YouTube requires creators to label realistic synthetic media and can penalize deceptive manipulation under its misinformation and spam policies. Meta has moved to label AI-generated images at scale using industry metadata signals, while also removing manipulated media that could mislead about real-world events in certain contexts. TikTok mandates AI labels and has rolled out provenance tags through partnerships with content authenticity initiatives.

X’s approach is distinctive in its focus on monetization: creators can post labeled AI conflict content and remain eligible, but concealment risks losing payouts. That distinction might encourage responsible labeling without turning the platform into an AI-free zone.

What creators should do now to stay eligible for payouts

Label any synthetic or heavily AI-altered war footage clearly in the post text and, ideally, on-screen. Keep provenance data intact by exporting with metadata where possible, and consider adopting content authenticity standards that embed creation details. Provide context when mixing real and AI elements, and avoid photorealistic depictions of current conflicts that could plausibly be misread as authentic reporting.

Monitor Community Notes on your posts and be prepared to update labels if contributors add context. X has not published a formal appeals flow specific to these suspensions, but creators should document their disclosures and edits in case disputes arise. The practical takeaway is simple: transparency preserves both audience trust and access to payouts.