The Supreme Court has declined to review a challenge to the U.S. Copyright Office’s refusal to register an AI-generated artwork, leaving in place decisions that require human authorship for copyright protection and cementing, for now, the boundaries around machine-made creativity.

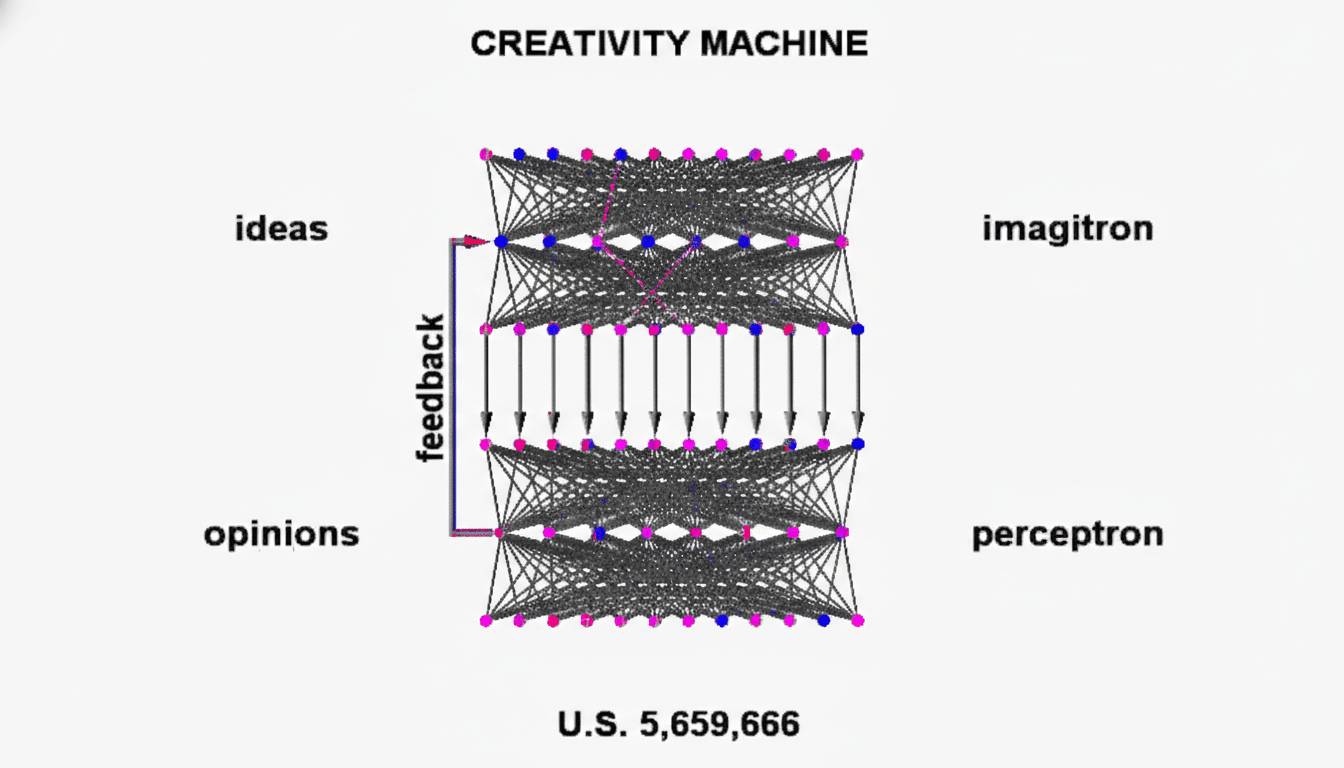

The petition stemmed from computer scientist Stephen Thaler’s attempt to register an image created by his AI system, often described as the “Creativity Machine.” Examiners at the Copyright Office rejected the application on the ground that the image lacked human authorship, a position that a federal district court and then a federal appeals court upheld. By turning away the case, the Supreme Court leaves that framework intact without weighing in on the merits.

Practically, the decision keeps the Copyright Office’s current approach in force: unedited outputs from generative models are not protected, while works that integrate AI tools but still reflect the “centrality of human creativity” can qualify. That distinction, outlined in the agency’s policy statements and a subsequent report, remains the operative rule for artists, publishers, and platforms.

What the Court Did and Why It Matters for Creators

By denying certiorari, the Court did not issue a new legal test; it simply left the lower-court ruling and the agency’s interpretation undisturbed. For creators seeking exclusive rights in purely algorithmic images, the status quo persists: no registration, no statutory damages, and fewer practical enforcement options.

The outcome aligns with more than a century of authorship jurisprudence. Courts have long tied copyright to human creative choices, a line of reasoning traceable to Burrow-Giles Lithographic Co. v. Sarony on photographs and reinforced by the Ninth Circuit’s “monkey selfie” decision, which held that an animal cannot sue under the Copyright Act. The same human-authorship anchor now shapes how agencies and courts view machine outputs.

How the Case Shaped U.S. Copyright Policy

Thaler’s dispute accelerated formal guidance. The Copyright Office issued registration instructions making clear that applicants must disclose and disclaim AI-generated content. The same reasoning played out in high-profile matters: for example, the Office partially canceled a registration for the comic book Zarya of the Dawn, finding the text and the author’s selection and arrangement of the material protectable but not the individual Midjourney-generated images, because their expressive elements were determined by the model rather than the author.

The agency also ran an extensive public inquiry on AI and copyright and reported receiving more than 10,000 comments from creators, tech companies, academics, and civil society. Its subsequent report drew the now-familiar line—unedited generative outputs are out, while AI-assisted works may be in if a human’s selection, arrangement, or modification contributes original expression.

What Counts as Protectable Human Creative Input

Prompts alone rarely suffice. The Office has said brief text prompts typically capture ideas and instructions, not the protectable expression that emerges from the model. What moves the needle are human contributions that materially shape the final work—substantive edits, iterative curation where the author’s judgment is evident, compositing, or original elements layered onto AI material.

For creators using AI tools, paper trails matter. Keep prompt histories, intermediate outputs, edit logs, and layer files that show the arc of human decision-making. When registering, identify the human-authored portions and disclaim the machine-generated segments. This improves the odds of registration and clarifies the scope of rights, which in turn helps downstream users understand what they can license with confidence.
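As a rough illustration of what such a paper trail could look like in practice, the following Python sketch appends each step of a project to a timestamped log and fingerprints any saved file with a SHA-256 hash. It is a minimal example under stated assumptions, not a format the Copyright Office prescribes: the step labels (`prompt`, `ai_output`, `human_edit`), the function names, and the JSON Lines log layout are all hypothetical choices made for this example.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional

# Hypothetical step labels chosen for this sketch; they are not
# categories defined by the Copyright Office.
STEP_KINDS = ("prompt", "ai_output", "human_edit")


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def record_step(log_path: Path, kind: str, note: str,
                artifact: Optional[Path] = None) -> dict:
    """Append one step of the creative process to a JSON Lines log."""
    if kind not in STEP_KINDS:
        raise ValueError(f"unknown step kind: {kind!r}")
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "kind": kind,
        "note": note,
        # The hash ties the log entry to the exact file saved at this step.
        "artifact": str(artifact) if artifact else None,
        "sha256": sha256_of(artifact) if artifact else None,
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(entry) + "\n")
    return entry


def summarize(log_path: Path) -> dict:
    """Count logged steps by kind, e.g. to help draft a disclosure."""
    counts = {kind: 0 for kind in STEP_KINDS}
    with log_path.open(encoding="utf-8") as fh:
        for line in fh:
            counts[json.loads(line)["kind"]] += 1
    return counts
```

A creator might call `record_step(log, "human_edit", "repainted the background by hand", Path("draft_v3.png"))` after each save. A log like this only documents the process; whether the human contributions it records are enough for registration remains a judgment for the Office.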

Implications for the AI Economy and Copyright

The denial does not resolve separate fights over training data and fair use, where newsrooms, artists, and stock agencies are suing AI developers. Those cases—such as artists’ claims against Stability AI and litigation by publishers against model makers—turn on different parts of the statute. Still, today’s outcome signals that, absent new legislation, courts and agencies will hew closely to the text that ties authorship to human creativity.

Expect increased investment in provenance and disclosure tools. The Coalition for Content Provenance and Authenticity, whose members include major media and tech firms, is pushing standards that embed origin metadata into files. Stock platforms have adopted labeling and vetting for AI-assisted submissions. These measures won’t convert machine outputs into copyrighted works, but they help buyers assess risk and creators signal where human authorship begins.

What to Watch Next in AI and Copyright Law

Regulators are still fine-tuning guidance, and Congress is studying whether the law needs updates to reflect widespread generative tools. Future cases will likely probe the gray zones: how much editing is enough, how to treat collaborative works that mix human and machine contributions, and how disclosure failures affect enforcement.

For now, the takeaway is straightforward: creators who rely on fully automated outputs should not expect exclusive rights, while those who can demonstrate significant human choices and craftsmanship retain a path to protection. The Supreme Court’s decision keeps that line clear—and keeps the pressure on policymakers to decide whether it should move.