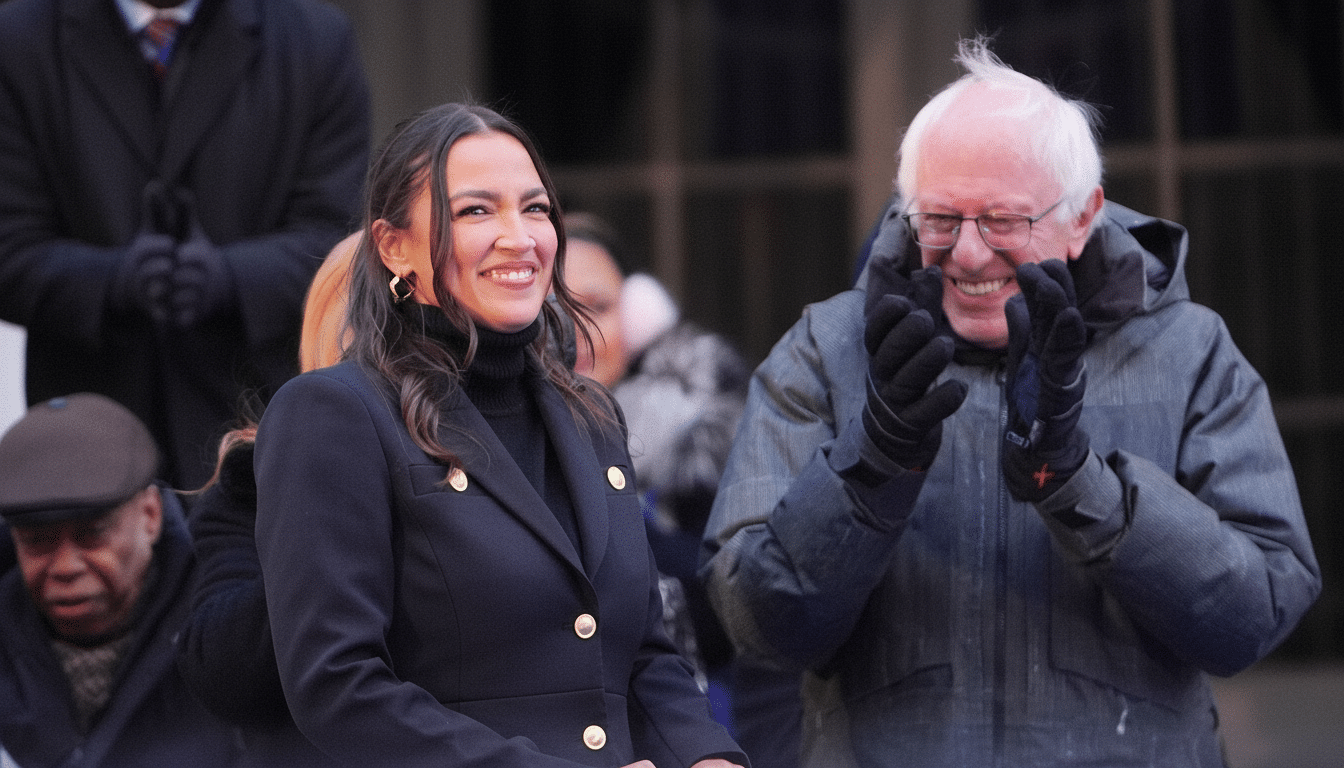

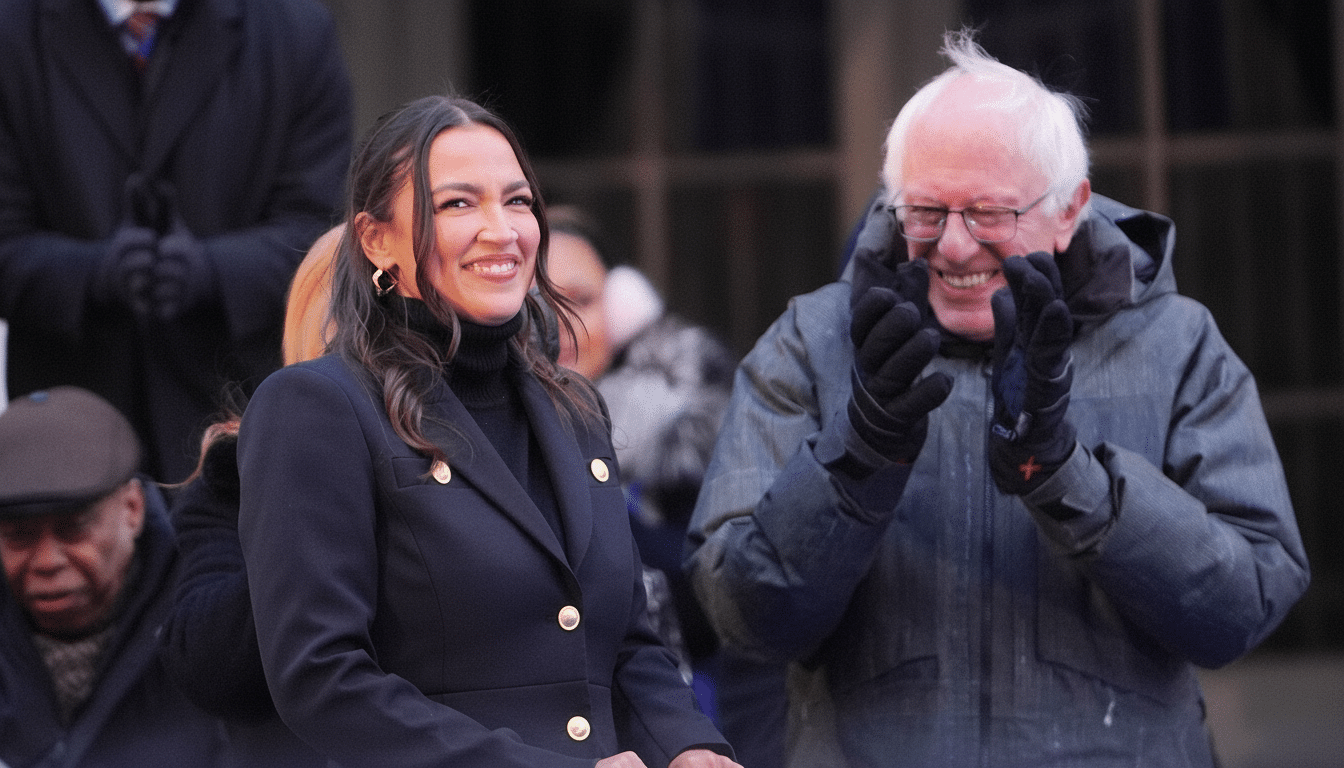

Senator Bernie Sanders and Representative Alexandria Ocasio-Cortez unveiled companion bills that would halt construction of new data centers with peak power loads above 20 megawatts until Congress enacts comprehensive rules for artificial intelligence. The move squarely targets the hyperscale infrastructure driving today’s AI boom and, if adopted, would put a large share of planned facilities on ice.

The 20 MW threshold would spare smaller edge sites but capture most AI training clusters and many cloud availability zones, where single campuses commonly exceed 50–100 MW. Backers frame the pause as a safety valve while federal agencies set guardrails for increasingly powerful models that require enormous compute and energy.

What The Proposed Bills Would Do And How They Work

The proposal imposes a temporary moratorium on new data centers larger than 20 MW of peak load, tied to the enactment of a broader AI governance package. In addition to the pause, the sponsors call for pre-release federal review and certification of advanced AI models, protections against AI-driven job displacement, limits on environmental impacts from digital infrastructure, and requirements that construction use union labor. They also back curbs on exporting advanced chips to countries without comparable AI safeguards.

Sanders and Ocasio-Cortez cite repeated warnings from industry leaders who have urged tighter oversight. Among the voices they point to are Elon Musk, who has said AI is “far more dangerous than nukes,” Google DeepMind’s Demis Hassabis, Anthropic’s Dario Amodei, OpenAI’s Sam Altman, and Nobel laureate Geoffrey Hinton. Public sentiment is more cautious than bullish: a recent Pew Research Center survey found most Americans are more concerned than excited about AI, and only 10% said their excitement outweighs their concern.

Why Lawmakers Are Targeting Large Data Centers Now

AI’s rapid growth has turned data centers from back-office utilities into front-page infrastructure. Communities from Northern Virginia’s “Data Center Alley” to fast-growing corridors in Ohio, Texas, and Georgia are wrestling with land use, round-the-clock noise, transmission buildouts, and competition for limited grid capacity. Utilities are rewriting load forecasts on the fly: Dominion Energy in Virginia projects that data center demand will multiply over the next decade, and grid operators such as PJM and ERCOT warn that large new loads are stressing interconnection queues.

The North American Electric Reliability Corporation has flagged rapid demand growth from large loads, including data centers, as a reliability challenge for planners. Local officials, meanwhile, face a balancing act: the tax base and jobs created by server farms versus the infrastructure costs, water use, and land commitments they lock in for decades.

Power And Water Strain By The Numbers For AI Growth

The International Energy Agency estimates global data center electricity demand could roughly double between 2022 and 2026, surpassing 1,000 terawatt-hours and approaching the annual consumption of a country like Japan. AI accelerators intensify that curve by packing more compute into each rack, pushing facilities toward higher power densities and specialized cooling.
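For a sense of scale, the doubling described above can be converted into an implied yearly growth rate. The snippet below is a back-of-the-envelope sketch, not an IEA calculation: it assumes smooth compound growth and an exact doubling over the four years from 2022 to 2026, and the function name is illustrative only.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Compound annual growth rate that turns 1.0 into `multiple` over `years`."""
    return multiple ** (1 / years) - 1


# Assumption: demand exactly doubles between 2022 and 2026 (four years).
rate = implied_annual_growth(2.0, 2026 - 2022)
print(f"Implied growth: {rate:.1%} per year")
```

Under those assumptions, demand would need to grow by a little under 19% every year, which helps explain why utilities are revising their forecasts.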

Water is part of the footprint, too. Microsoft’s latest sustainability report disclosed a year-over-year jump of roughly 34% in water consumption, and Google reported an increase of about 20%, trends both companies have linked in part to AI expansion. Academic researchers from the University of California, Riverside and the University of Texas at Arlington have estimated that training a single state-of-the-art model can drive water use in the hundreds of thousands of liters when accounting for data center cooling and upstream electricity generation.

By drawing the line at 20 MW, the bills zero in on the most resource-intensive projects. Many hyperscale and AI-focused builds cluster well above that mark, meaning a federal pause would immediately ripple through development pipelines in states courting cloud investments with tax incentives.

Political And Market Headwinds Shaping The Debate

Expect pushback from industry groups and from national security hawks, who argue a moratorium would blunt America’s edge in AI against rivals such as China. Business groups will emphasize construction and operations jobs, along with long-term tax revenue for local governments. The sponsors’ call to restrict exports of advanced chips to countries lacking similar AI rules intersects with existing Commerce Department controls, adding a geopolitically charged layer to the debate.

Tech firms are also formidable political actors. According to OpenSecrets, major platforms and chipmakers collectively spend tens of millions of dollars per year on federal lobbying. Any broad construction ban could invite legal fights over federal preemption, environmental review authorities, and state incentive programs designed to attract data center investment.

What To Watch Next As Congress Weighs AI Data Centers

Even supporters describe the bills as an opening bid that could evolve. Lawmakers may explore narrower options, such as licensing “frontier” AI systems before release, conditioning federal tax credits on efficiency and water standards, or limiting large builds in water-stressed regions rather than a blanket nationwide halt. Agencies like the Department of Energy, the Environmental Protection Agency, and the Federal Energy Regulatory Commission would likely play central roles in any negotiated framework.

For developers and communities, the immediate implication is uncertainty. Some builders may race to subdivide campuses into sub-20 MW phases, but power-delivery constraints and interconnection backlogs are not so easily segmented. The central question lawmakers must resolve is whether the AI era’s infrastructure should keep sprinting ahead of policy—or wait for rules that match its scale.