Nvidia delivered another blowout quarter, setting fresh highs for revenue as global AI infrastructure spending surges to unprecedented levels. Demand for accelerated computing continued to outrun supply, powering a sharp jump in data center sales and reinforcing the view that record capex outlays by cloud and AI providers are translating directly into Nvidia’s top line.

Data Center Dominance And A Deeper Breakdown

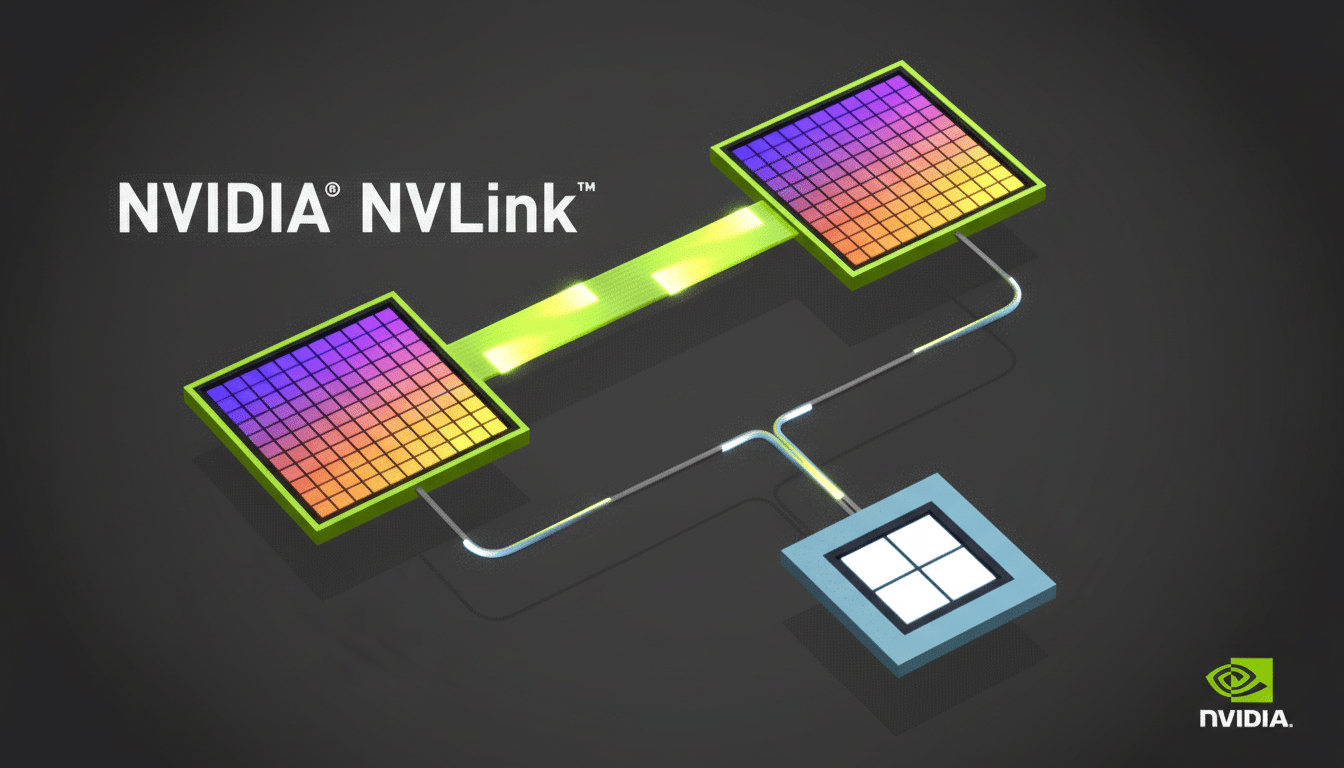

The company reported $68 billion in quarterly revenue, up 73% year over year, with $62 billion coming from its data center segment. Nvidia also broke out the engine of that growth: $51 billion from compute, largely GPUs, and $11 billion from networking, including NVLink systems that tie thousands of accelerators together into AI supercomputers.

Full-year revenue reached $215 billion, underscoring how AI training and inference have become a structural, not cyclical, demand driver. On the earnings call, executives noted that even six-year-old GPUs in the cloud are being fully utilized, a telling sign that capacity remains scarce and that pricing power is intact across product generations.

Record Capex Becomes The Flywheel For AI Growth

Across the industry, capital spending for AI infrastructure has entered “build-at-all-costs” mode. Hyperscalers including Microsoft, Amazon, and Google have all signaled elevated data center capex to expand AI capacity, while enterprise buyers accelerate pilot-to-production rollouts. Industry trackers like Dell’Oro Group and Synergy Research have characterized the current wave as the fastest buildout of high-performance compute ever recorded.

Nvidia’s thesis is straightforward: more compute quickly becomes more revenue for customers. Management argued that AI services are now monetizing at scale—measured in tokens generated, queries answered, and models deployed—supporting continued returns on massive infrastructure budgets. Networking growth alongside GPUs also highlights a broader platform sale: higher attach rates for NVLink switches, cabling, and software stack subscriptions deepen Nvidia’s share of wallet per data center rack.

China Remains A Wild Card For Nvidia’s Outlook

Despite a partial easing of export restrictions, Nvidia reported no revenue from chip exports to China in the quarter. The company said limited quantities of H200 products had received U.S. approvals for China-based customers, but emphasized there is no clarity on whether those shipments will ultimately be allowed to land or whether any revenue from them can be recognized.

Longer term, competitive dynamics in China are evolving. Management pointed to local GPU vendors gaining momentum after recent IPOs, a nod to players such as Moore Threads that are pursuing domestic accelerators. The implication is twofold: export policy remains a near-term swing factor, while homegrown alternatives could reshape market share in the region over time.

Strategic Bets With OpenAI And The AI Ecosystem

Nvidia said it is working toward a partnership agreement with OpenAI tied to a potential $30 billion investment, while cautioning in filings with the U.S. Securities and Exchange Commission that there is no assurance a deal will occur. The company also highlighted ongoing collaborations with Anthropic, Meta, and xAI—relationships that reinforce Nvidia’s role at the center of the model training and deployment stack.

For Nvidia, deep ecosystem ties are as strategic as silicon leadership. Preferred access to flagship model training projects and co-design of large clusters can steer hardware specifications, software frameworks, and long-term road maps, creating switching costs that go beyond any single GPU generation.

Supply Chain Stretch And Competitive Pressure

Meeting demand still hinges on the supply chain’s most constrained links, notably advanced packaging and high-bandwidth memory. TSMC’s capacity expansions in CoWoS and supplier ramp-ups in HBM remain pivotal to how quickly new AI clusters can come online. Nvidia’s rising networking revenue suggests it is not just selling chips but orchestrating full-stack systems at cluster scale.

Competition is intensifying. AMD’s latest accelerators, custom silicon from hyperscalers, and specialized AI processors from startups are all vying for share in training and inference. Yet Nvidia’s CUDA software moat, model optimization toolchains, and mature developer ecosystem continue to set a high bar that rivals must clear to win meaningful workloads.

What To Watch Next As AI Infrastructure Expands

Key markers for the next leg of the cycle include the pace of new cluster rollouts, networking attach rates, and evidence of sustained ROI from AI services across industries. Watch for progress on China export visibility, any definitive outcome on the OpenAI partnership, and signals from hyperscalers on capex plans as existing capacity is monetized at higher utilization.

If the capex flywheel keeps turning, as hyperscaler spending signals and fully utilized GPU fleets suggest it will, Nvidia’s data center machine has further room to run. Another record quarter amid record spending is not just a headline; it’s the operating model for the AI era.