Attackers are moving faster with artificial intelligence, and the gap between offense and defense is widening. Generative models now automate reconnaissance, write polymorphic malware, and spin up convincing deepfakes at industrial scale. If you’re waiting for tooling to catch up, you’re already behind. The only sustainable response is to match your adversaries’ speed, persistence, and experimentation.

Recent threat intelligence from Google’s Threat Intelligence Group points to a shift from mere productivity boosts to AI-enabled malware that changes behavior mid-execution. Anthropic has documented professional “influence-as-a-service” operations and novice actors leveling up with LLM-assisted malware development. Meanwhile, hyperreal video and voice synthesis is collapsing trust in what we see and hear; a widely reported case saw a finance worker approve a $25 million transfer after a deepfaked video call of senior executives.

- Track the evolving threat landscape with AI-first intel

- Eliminate phishable authentication with passkeys and MFA

- Inventory and govern AI agents as privileged identities

- Apply real Zero Trust across data, models, and networks

- Control OAuth and API delegations to reduce token risk

- Build deepfake resilience with strong verification steps

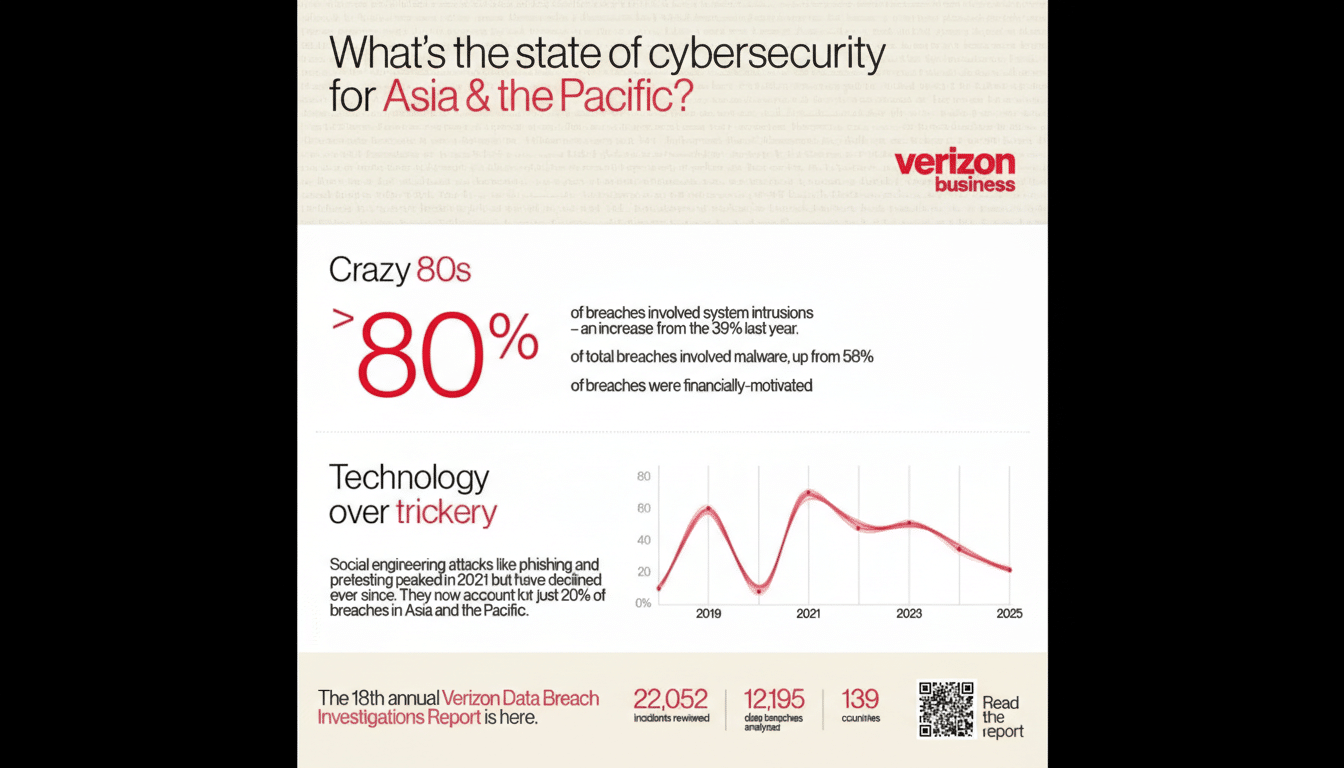

The stakes are concrete. Verizon’s Data Breach Investigations Report finds the “human element” in 68% of breaches, with social engineering and credential misuse still dominant entry points. IBM’s Cost of a Data Breach research pegs average breach losses near $4.9 million. AI doesn’t invent new motives—it accelerates old ones. Here are six force-multipliers to keep pace.

Track the evolving threat landscape with AI-first intel

Build a habit of threat literacy. Prioritize updates from frontline sources: Google’s Threat Intelligence Group, Anthropic’s safety team, OpenAI’s risk findings, CISA advisories, MITRE ATT&CK (including emerging AI-oriented techniques), the OWASP Top 10 for LLM Applications, and the AI Incident Database. Feed these into your intel pipeline and brief decision-makers monthly.

Translate learnings into action: update blocklists, tune detections for LLM-assisted phishing patterns, add model egress monitoring to catch sensitive prompts leaving your environment, and red-team your own generative systems. If you run models, log prompts, outputs, and tool calls for post-incident forensics.
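To make "log prompts, outputs, and tool calls" concrete, here is a minimal sketch of a structured audit record. The field names, the `audit_record` and `verify_chain` helpers, and the hash-chaining step are illustrative assumptions rather than a prescribed format; chaining is one optional way to make after-the-fact tampering detectable.

```python
import hashlib
import json
import time
import uuid

ALLOWED_TYPES = {"prompt", "output", "tool_call"}


def _digest(body):
    """Hash a record body deterministically (keys sorted before hashing)."""
    serialized = json.dumps(body, sort_keys=True)
    return hashlib.sha256(serialized.encode("utf-8")).hexdigest()


def audit_record(session_id, event_type, payload, prev_hash=""):
    """Build one audit entry for a prompt, a model output, or a tool call."""
    if event_type not in ALLOWED_TYPES:
        raise ValueError(f"unknown event type: {event_type}")
    body = {
        "id": str(uuid.uuid4()),
        "ts": time.time(),
        "session": session_id,
        "type": event_type,
        "payload": payload,
        "prev": prev_hash,  # hash of the previous entry; "" for the first one
    }
    body["hash"] = _digest(body)
    return body


def verify_chain(records):
    """Return True if every entry is unmodified and links to its predecessor."""
    prev = ""
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if body["prev"] != prev or _digest(body) != rec["hash"]:
            return False
        prev = rec["hash"]
    return True
```

In practice each record would be appended as one JSON line to write-once storage; that storage choice is outside the scope of this sketch.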

Eliminate phishable authentication with passkeys and MFA

Attackers use AI to clone voices, spoof domains, and craft perfect prompts. Don’t give them an easy win. Move users and admins to phishing-resistant credentials: FIDO2 passkeys bound to a user’s device or hardware keys, plus number-matching or device-bound approvals for step-up checks. Kill SMS and email one-time codes wherever possible—those are now low-hanging fruit.

Back this with conditional access, impossible-travel detection, and mandatory reauthentication for sensitive actions. Phase out legacy protocols that bypass MFA. The fastest way to shrink your attack surface is to remove passwords from critical workflows.
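An impossible-travel check can be sketched in a few lines: compare two consecutive logins and flag the pair if the implied speed exceeds what a person could plausibly travel. The `Login` type, the 900 km/h ceiling, and the 50 km tolerance for near-simultaneous logins are assumptions chosen for illustration; real identity platforms also weigh VPN exits and geolocation error.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0
MAX_SPEED_KMH = 900.0      # assumed ceiling, roughly airliner cruise speed
SAME_PLACE_KM = 50.0       # assumed tolerance for geolocation noise


@dataclass(frozen=True)
class Login:
    user: str
    ts: float   # Unix timestamp in seconds
    lat: float  # degrees
    lon: float  # degrees


def distance_km(a, b):
    """Great-circle distance between two logins (haversine formula)."""
    lat1, lon1, lat2, lon2 = map(math.radians, (a.lat, a.lon, b.lat, b.lon))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))


def is_impossible_travel(prev, curr, max_speed_kmh=MAX_SPEED_KMH):
    """Flag a login pair whose implied travel speed is implausible."""
    hours = (curr.ts - prev.ts) / 3600.0
    dist = distance_km(prev, curr)
    if hours <= 0:
        # Simultaneous or out-of-order events: flag only if clearly far apart.
        return dist > SAME_PLACE_KM
    return dist / hours > max_speed_kmh
```

A flagged pair is a reasonable trigger for step-up authentication rather than an outright block, since travel and VPN use produce false positives.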

Inventory and govern AI agents as privileged identities

Agentic AI is arriving in ticketing, finance, code repos, and customer ops. Treat every non-human identity like a high-value target. Register each agent in your identity and access management platform (e.g., Microsoft, Okta, Ping Identity). Issue unique credentials, scope least-privilege API access, and require per-action approvals for anything with financial, code, or data impact.

Segment runtime environments so a compromised agent can’t move laterally. Maintain an “agent bill of materials” listing its tools, data sources, model versions, and owners. Crucially, implement a kill switch: the ability to suspend an agent and revoke its keys in minutes, not days.
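The "agent bill of materials" and kill switch described above can be modeled as a small registry. This is a sketch under stated assumptions: `AgentRecord`, `AgentRegistry`, and the in-memory revocation set are hypothetical names, and a real deployment would back them with the identity platform and its key-revocation API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """One bill-of-materials entry for a non-human identity."""
    agent_id: str
    owner: str
    model_version: str
    tools: list
    data_sources: list
    key_ids: set = field(default_factory=set)
    suspended: bool = False


class AgentRegistry:
    def __init__(self):
        self._agents = {}
        self._revoked_keys = set()

    def register(self, record):
        if record.agent_id in self._agents:
            raise ValueError(f"agent already registered: {record.agent_id}")
        self._agents[record.agent_id] = record

    def kill(self, agent_id):
        """Kill switch: suspend the agent and revoke all of its keys."""
        rec = self._agents[agent_id]
        rec.suspended = True
        revoked = set(rec.key_ids)
        self._revoked_keys |= revoked
        rec.key_ids.clear()
        return revoked

    def is_authorized(self, agent_id, key_id, tool):
        """Default deny: unknown, suspended, or out-of-scope requests fail."""
        rec = self._agents.get(agent_id)
        if rec is None or rec.suspended:
            return False
        if key_id in self._revoked_keys or key_id not in rec.key_ids:
            return False
        return tool in rec.tools
```

Because every call is checked against the registry, flipping `suspended` takes effect on the next request, which is what makes revocation in minutes realistic.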

Apply real Zero Trust across data, models, and networks

Zero trust is not a slogan; it’s a discipline. Start with default-deny, then grant just-in-time, just-enough privileges based on verified identity, device health, and context. Microsegment networks; isolate training data, model endpoints, and vector databases; and monitor egress from model-serving infrastructure to prevent data leakage.
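As a rough illustration of default-deny, the decision function below allows a request only when identity and device checks pass and an explicit rule matches. The resource names, zone names, and the `ALLOW_RULES` table are hypothetical; a production policy engine would also handle just-in-time grants and expiry.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    identity_verified: bool
    device_compliant: bool
    resource: str
    action: str
    network_zone: str


# Hypothetical allow-list: (resource, action) -> zones permitted to perform it.
# Anything absent from this table is denied.
ALLOW_RULES = {
    ("model-endpoint", "invoke"): {"app-tier"},
    ("vector-db", "read"): {"app-tier"},
    ("training-data", "read"): {"ml-training"},
}


def decide(req):
    """Return True only if every check passes; the default answer is deny."""
    if not (req.identity_verified and req.device_compliant):
        return False
    allowed_zones = ALLOW_RULES.get((req.resource, req.action))
    return allowed_zones is not None and req.network_zone in allowed_zones
```

Note that the table isolates training data from the application tier: an app-tier workload can query the vector database but has no rule that lets it read training data.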

Pair data loss prevention with policy engines that redact secrets before prompts ever leave the client. Adopt continuous verification for privileged sessions and rotate short-lived credentials everywhere. Friction that blocks one AI-accelerated intrusion is worth the extra click.
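Client-side redaction might look like the sketch below, which scrubs a prompt before it is sent to any model. The three patterns are illustrative assumptions, not a complete detector; a real DLP policy would cover many more secret formats and would be tuned against false positives.

```python
import re

# Illustrative patterns only; extend these for your own secret formats.
PATTERNS = {
    "PRIVATE_KEY": re.compile(
        r"-----BEGIN [A-Z ]*PRIVATE KEY-----.*?-----END [A-Z ]*PRIVATE KEY-----",
        re.DOTALL,
    ),
    "AWS_ACCESS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def redact(prompt):
    """Replace matches with placeholders and report what was found."""
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[REDACTED:{label}]", prompt)
        findings.extend([label] * count)
    return prompt, findings


clean, found = redact("Use key AKIAIOSFODNN7EXAMPLE and mail alice@example.com")
print(clean)  # Use key [REDACTED:AWS_ACCESS_KEY] and mail [REDACTED:EMAIL]
```

Returning the findings alongside the cleaned text lets the client log that a redaction happened without logging the secret itself.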

Control OAuth and API delegations to reduce token risk

OAuth tokens are catnip for adversaries because a stolen token grants access without a password or an MFA prompt. Inventory every app-to-app consent across your SaaS estate and revoke stale or overprivileged grants. Prefer short-lived, audience-restricted tokens with narrow scopes; lock down refresh tokens and alert on unusual token minting or cross-tenant API calls.

Expect explosive growth in delegated access as agents automate tasks across calendars, CRMs, storage, and code hosts. Put a review board around new integrations, require owner attestations, and enforce automated expiry. If you can’t find and revoke a token in minutes, you don’t control it.
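A periodic grant review can start as simply as the sketch below, which flags delegations that are stale, overprivileged, or missing an expiry. The `Grant` type, the scope names in `HIGH_RISK_SCOPES`, and the 90-day staleness window are assumptions for illustration; the actual inventory would come from each SaaS provider's admin API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical scope names and review window; adjust to your providers.
HIGH_RISK_SCOPES = frozenset({"mail.read_all", "files.read_all", "admin"})
STALE_AFTER = timedelta(days=90)


@dataclass(frozen=True)
class Grant:
    app: str
    user: str
    scopes: frozenset
    last_used: datetime
    expires_at: Optional[datetime]


def review_grant(grant, now):
    """Return the reasons a grant should be revoked or re-approved."""
    reasons = []
    if now - grant.last_used > STALE_AFTER:
        reasons.append("stale")
    if grant.scopes & HIGH_RISK_SCOPES:
        reasons.append("overprivileged")
    if grant.expires_at is None:
        reasons.append("no_expiry")
    return reasons


def grants_to_revoke(grants, now=None):
    """Pair each flagged grant with its reasons; clean grants are omitted."""
    now = now or datetime.now(timezone.utc)
    flagged = [(g, review_grant(g, now)) for g in grants]
    return [(g, reasons) for g, reasons in flagged if reasons]
```

Running this on a schedule and routing the output to the integration's named owner is one way to implement the attestation and automated-expiry steps.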

Build deepfake resilience with strong verification steps

Assume voices and faces can be forged. Establish out-of-band verification for sensitive requests: known-number callbacks, shared passphrases, or dual approvals in a separate system. Train staff to spot latency, lip-sync slips, and context errors—and to slow down when stakes are high.
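The verification steps above can be encoded as a release gate so that no single call or video meeting is enough to move money. This is a sketch under assumptions: the `PaymentRequest` type, the 10,000 threshold for dual approval, and the `directory` of known numbers are all hypothetical.

```python
from dataclasses import dataclass, field

DUAL_APPROVAL_THRESHOLD = 10_000.0  # assumed policy value


@dataclass
class PaymentRequest:
    request_id: str
    requester: str
    amount: float
    callback_verified: bool = False
    approvers: set = field(default_factory=set)


def record_callback(req, dialed_number, directory):
    """Count a callback only if it went to the number already on file,
    never to a number supplied in the request itself."""
    req.callback_verified = directory.get(req.requester) == dialed_number
    return req.callback_verified


def approve(req, approver):
    """Record an approval from someone other than the requester."""
    if approver == req.requester:
        raise ValueError("requester cannot approve their own request")
    req.approvers.add(approver)


def may_release(req):
    """Release requires a verified callback plus enough distinct approvers."""
    if not req.callback_verified:
        return False
    needed = 2 if req.amount >= DUAL_APPROVAL_THRESHOLD else 1
    return len(req.approvers) >= needed
```

The point of the design is that a convincing deepfake on one channel cannot satisfy a check that lives in a separate system.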

Adopt content provenance where available via C2PA-backed signals, but don’t rely on watermarks alone—they can be stripped. Update SOC runbooks for deepfake-enabled fraud, and exercise scenarios that simulate cloned executives joining video calls or sending urgent payment orders.

The pattern is clear: adversaries are using AI to compress the time from idea to intrusion. Your counter is persistence and preparation—continuously learning, removing easy paths in, controlling machine identities, and rehearsing failures. You won’t stop every attempt. But if you make AI work harder to hurt you, most attackers will move on to an easier target.