Application modernization sounds strategic. In reality, it’s messy.

You’re refactoring legacy code. Migrating from monolith to microservices. Moving workloads to the cloud. Replacing UI frameworks. Introducing APIs where none existed before. And doing all of this while the business expects zero downtime and faster releases.

- 1. Handling Constant UI and Workflow Changes (Self-Healing in Action)

- 2. Accelerating Legacy-to-Cloud Migrations

- 3. Intelligent Test Generation for Refactored Code

- 4. Supporting API-First and Microservices Architectures

- 5. Risk-Based Test Optimization

- 6. Reducing Technical Debt in Automation

- 7. Scaling Across Channels and Environments

- 8. Continuous Learning from Production Data

- What This Really Means for Modernization Teams

Here’s the thing: modernization fails less because of technology, and more because quality doesn’t scale with change.

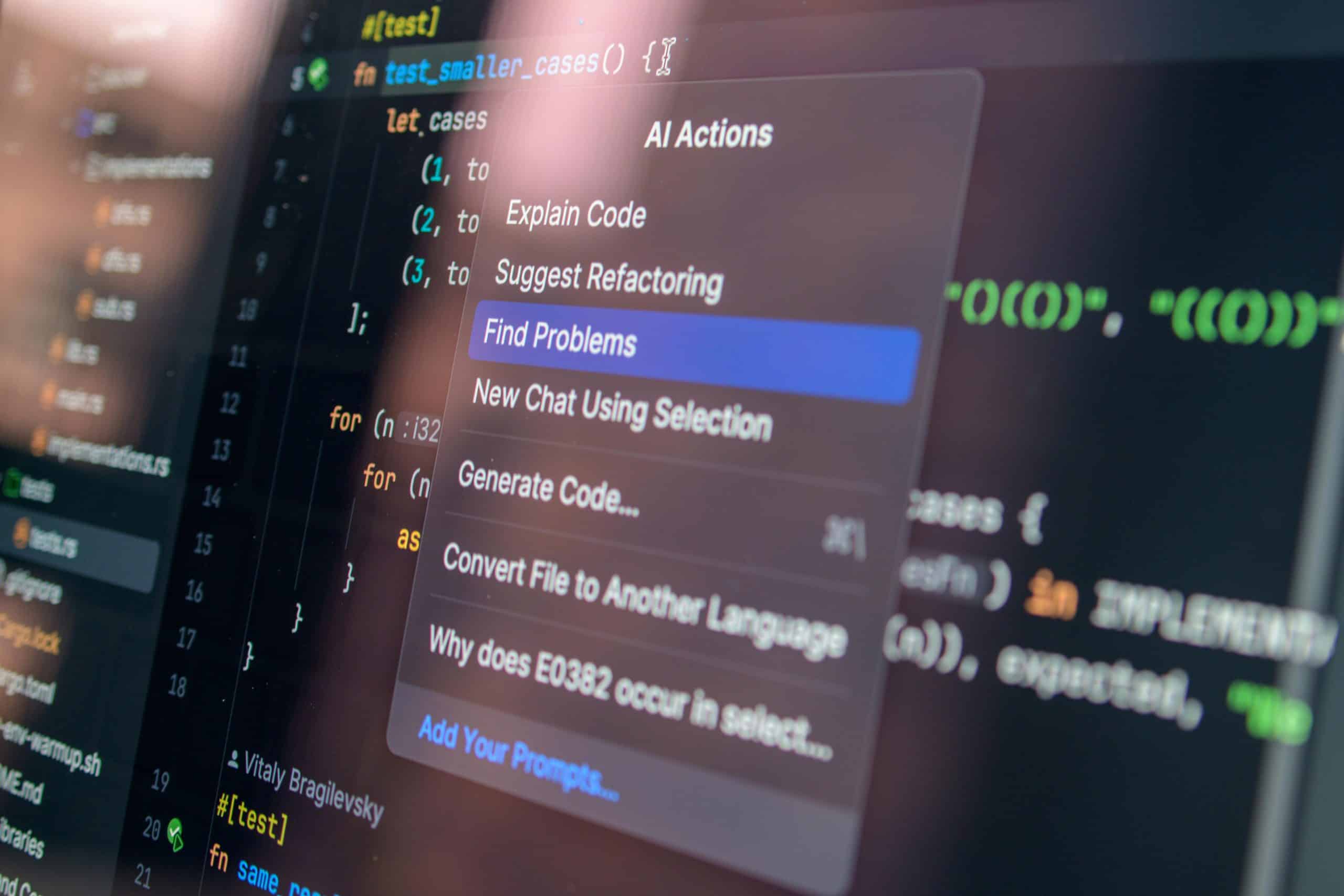

That’s where AI-based testing tools step in.

Let’s break down how they actually help.

1. Handling Constant UI and Workflow Changes (Self-Healing in Action)

Modernization almost always changes the UI layer first. New design systems. New component libraries. React replacing legacy JSP. Angular replacing old web forms.

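Traditional automation scripts break with every DOM change, because each step is usually tied to a single locator such as an ID or an XPath. Self-healing tools instead record several attributes of each element and fall back on the others when one of them changes.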

What this really means is:

- Fewer broken tests after UI refactors

- Reduced maintenance effort

- Faster regression stabilization

Instead of rewriting scripts sprint after sprint, your automation adapts.

Self-healing is not magic. It’s pattern recognition applied to test stability.
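To make that pattern recognition concrete, here is a minimal sketch of one way a self-healing locator could work. It assumes elements are represented as plain attribute dictionaries. The names `similarity` and `heal_locator`, the sample attributes, and the 0.6 threshold are all hypothetical and do not describe any particular vendor's implementation.

```python
from __future__ import annotations


def similarity(recorded: dict, candidate: dict) -> float:
    """Fraction of the recorded attributes that the candidate still matches."""
    if not recorded:
        return 0.0
    matches = sum(1 for key, value in recorded.items() if candidate.get(key) == value)
    return matches / len(recorded)


def heal_locator(recorded: dict, candidates: list[dict], threshold: float = 0.6):
    """Return the closest candidate element, or None if nothing is close enough."""
    best = max(candidates, key=lambda c: similarity(recorded, c), default=None)
    if best is None or similarity(recorded, best) < threshold:
        return None
    return best


# Attributes captured when the test was first recorded (hypothetical values).
recorded = {"tag": "button", "id": "submit-btn", "text": "Submit",
            "type": "submit", "name": "submit"}

# The page after a UI refactor: the id changed, everything else survived.
page = [
    {"tag": "button", "id": "btn-primary-42", "text": "Submit",
     "type": "submit", "name": "submit"},
    {"tag": "button", "id": "cancel", "text": "Cancel",
     "type": "button", "name": "cancel"},
]

healed = heal_locator(recorded, page)
print(healed["id"] if healed else "no match")
```

The threshold is the important design choice: set it too low and the test silently clicks the wrong element, set it too high and nothing ever heals.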

2. Accelerating Legacy-to-Cloud Migrations

When applications move to the cloud, everything shifts:

- Infrastructure

- Authentication layers

- API endpoints

- Performance characteristics

AI-powered testing platforms help by:

- Automatically mapping API changes and parameter variations

- Generating regression scenarios across environments

- Flagging performance anomalies against learned baselines

Rather than manually rewriting coverage, teams can use AI-assisted test generation to quickly rebuild regression packs aligned to new cloud-native architectures.

Modernization speed increases because test coverage keeps pace.
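As a rough illustration of a baseline check, the sketch below flags a response time as anomalous when it sits far from the pre-migration average. It uses a plain z-score, which is a deliberately simple stand-in for whatever model a real tool applies. The function name, the sample latencies, and the threshold of 3 are assumptions.

```python
from statistics import mean, stdev


def is_latency_anomaly(baseline_ms, observed_ms, z_threshold=3.0):
    """Return True if observed_ms is more than z_threshold deviations from the baseline mean."""
    mu = mean(baseline_ms)
    sigma = stdev(baseline_ms)
    if sigma == 0:
        # A perfectly flat baseline: any difference at all is a deviation.
        return observed_ms != mu
    return abs(observed_ms - mu) / sigma > z_threshold


# Hypothetical response times (ms) measured on the legacy deployment.
baseline = [120, 118, 125, 122, 119, 121, 123]

print(is_latency_anomaly(baseline, 124))  # within normal variation
print(is_latency_anomaly(baseline, 180))  # far outside the baseline
```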

3. Intelligent Test Generation for Refactored Code

Modernization often involves:

- Breaking monoliths into microservices

- Rewriting business logic

- Introducing event-driven systems

AI-based tools can analyze application behavior, user journeys, and API contracts to suggest new test scenarios automatically.

This reduces:

- Coverage gaps during refactoring

- Human bias in selecting test cases

- Over-reliance on outdated regression suites

Instead of testing what used to exist, you test what now exists.

That shift is critical.

4. Supporting API-First and Microservices Architectures

Modern applications are API-heavy. Services communicate constantly, which is why many organizations study approaches used to scale API testing in distributed systems. Failures are often hidden beneath the UI.

AI-based testing tools bring scalability by:

- Auto-parameterizing API inputs for broader coverage

- Testing edge cases and status code variations

- Detecting contract drift across service versions

- Identifying unusual response patterns
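Auto-parameterization, the first item above, can be sketched as expanding a small parameter space into every combination of inputs. The endpoint fields and values below are invented for illustration, and a real tool would typically prune or prioritize combinations rather than enumerate them all.

```python
from itertools import product

# Hypothetical parameter space for an imagined "create order" endpoint.
PARAM_SPACE = {
    "quantity": [0, 1, 999],          # boundary-style values
    "currency": ["USD", "EUR"],
    "coupon": [None, "WELCOME10"],
}


def generate_cases(param_space):
    """Yield one request payload per combination of parameter values."""
    names = list(param_space)
    for values in product(*(param_space[name] for name in names)):
        yield dict(zip(names, values))


cases = list(generate_cases(PARAM_SPACE))
print(len(cases))  # 3 * 2 * 2 combinations
```

Three small parameters already yield twelve payloads, which is why enumerating inputs by hand stops scaling quickly.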

When you modernize into microservices, test complexity grows rapidly: every new service adds interactions, versions, and failure modes to validate. AI reduces the manual effort needed to scale validation.

It moves testing from “does this work?” to “is this resilient under variation?”
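Contract drift detection, mentioned in the list above, comes down to comparing what a service promised with what it now returns. The sketch below assumes each contract version has been flattened into a field-to-type map. The function name and the two sample versions are hypothetical.

```python
def detect_contract_drift(old: dict, new: dict) -> dict:
    """Compare two flat field -> type maps and report what changed between them."""
    removed = sorted(old.keys() - new.keys())
    added = sorted(new.keys() - old.keys())
    retyped = sorted(k for k in old.keys() & new.keys() if old[k] != new[k])
    return {"removed": removed, "added": added, "retyped": retyped}


# Two hypothetical versions of the same response contract.
v1 = {"id": "integer", "email": "string", "created": "string"}
v2 = {"id": "string", "email": "string", "created_at": "string"}

drift = detect_contract_drift(v1, v2)
print(drift)
```

Removed and retyped fields are the ones most likely to break consumers, so a pipeline might fail the build on those and only warn on additions.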

5. Risk-Based Test Optimization

Modernization projects are resource-intensive. You can’t test everything manually. You shouldn’t even try.

AI-driven risk analysis helps prioritize:

- High-impact business flows

- Frequently changed modules

- Historically defect-prone areas

Instead of running bloated regression cycles, teams focus automation where it matters most.

That improves:

- Release confidence

- Cycle time

- Test execution efficiency

Scalability isn’t just about more tests. It’s about smarter tests.
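One simple way to picture risk-based selection is a weighted score over the three signals listed earlier. In the sketch below, the weights, the 0-to-1 scales, and the sample suite are assumptions chosen for illustration. A real tool would derive these values from change history and defect data rather than hard-coding them.

```python
from dataclasses import dataclass


@dataclass
class SuiteEntry:
    name: str
    business_impact: float   # 0..1, how critical the flow is
    change_frequency: float  # 0..1, how often the module changes
    defect_history: float    # 0..1, how defect-prone it has been


# Hypothetical weights; tune them to your own release data.
WEIGHTS = {"business_impact": 0.5, "change_frequency": 0.3, "defect_history": 0.2}


def risk_score(entry: SuiteEntry) -> float:
    """Weighted sum of the three risk signals."""
    return (WEIGHTS["business_impact"] * entry.business_impact
            + WEIGHTS["change_frequency"] * entry.change_frequency
            + WEIGHTS["defect_history"] * entry.defect_history)


def prioritize(entries, budget):
    """Return the `budget` highest-risk tests, highest first."""
    return sorted(entries, key=risk_score, reverse=True)[:budget]


suite = [
    SuiteEntry("checkout_flow", 1.0, 0.8, 0.6),
    SuiteEntry("profile_avatar_upload", 0.2, 0.1, 0.1),
    SuiteEntry("login", 0.9, 0.3, 0.4),
    SuiteEntry("search_filters", 0.5, 0.9, 0.7),
]

selected = prioritize(suite, budget=2)
print([entry.name for entry in selected])
```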

6. Reducing Technical Debt in Automation

Legacy test automation frameworks become as brittle as the systems they test.

AI-based platforms help by:

- Abstracting test logic from low-level scripts

- Promoting modular test assets

- Encouraging reuse over duplication

- Simplifying maintenance through self-adjusting models

This reduces long-term automation debt and aligns testing architecture with modern software design principles.

Modern application. Modern test architecture.

They need to evolve together.

7. Scaling Across Channels and Environments

Modern systems are multi-channel:

- Web

- Mobile

- APIs

- Backend services

- Third-party integrations

ACCELQ’s AI-based unified testing platform allows validation across these layers within a single framework.

That means:

- End-to-end business flow validation

- Environment comparison (dev, QA, staging, prod-like)

- Parallel execution for faster feedback

Modernization without scalable test execution creates bottlenecks. AI removes those bottlenecks.
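Environment comparison and parallel execution can be sketched together: run the same check against every environment at once, then report whichever ones disagree with a reference. The environment names and canned responses below are hypothetical stand-ins for real calls, and `run_check` would normally drive an actual end-to-end flow.

```python
from concurrent.futures import ThreadPoolExecutor

# Canned results standing in for real end-to-end checks (hypothetical data).
FAKE_RESPONSES = {
    "dev": {"status": 200, "version": "2.4.0"},
    "qa": {"status": 200, "version": "2.4.0"},
    "staging": {"status": 200, "version": "2.3.9"},
}


def run_check(env):
    """Stand-in for executing one business flow against one environment."""
    return env, FAKE_RESPONSES[env]


def compare_environments(envs, reference):
    """Run the check on all environments in parallel and return those that differ."""
    with ThreadPoolExecutor(max_workers=len(envs)) as pool:
        results = dict(pool.map(run_check, envs))
    expected = results[reference]
    return {env: res for env, res in results.items() if res != expected}


mismatches = compare_environments(["dev", "qa", "staging"], reference="dev")
print(mismatches)
```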

8. Continuous Learning from Production Data

Some AI-driven tools analyze defect trends, failure patterns, and user behavior data.

They learn:

- Which flows fail most often

- Where edge cases are emerging

- How real users interact differently than expected

This feedback loop helps modernization efforts stay aligned with actual usage rather than assumptions.

Testing becomes adaptive, not reactive.
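A very small version of this feedback loop is to count the journeys real users take and surface the frequent ones that no test covers yet. The sketch below assumes journeys have already been extracted from production logs as lists of step names. The function name and the sample data are hypothetical.

```python
from collections import Counter


def suggest_scenarios(observed_journeys, covered, top_n=3):
    """Return the most frequent observed journeys that existing tests do not cover."""
    counts = Counter(tuple(journey) for journey in observed_journeys)
    covered_set = {tuple(journey) for journey in covered}
    uncovered = [(journey, n) for journey, n in counts.most_common()
                 if journey not in covered_set]
    return uncovered[:top_n]


# Hypothetical journeys reconstructed from production usage data.
observed = (
    [["login", "search", "checkout"]] * 3
    + [["login", "profile"]] * 2
    + [["login", "search"]]
)
# Journeys the current regression suite already exercises.
covered = [["login", "search"]]

suggestions = suggest_scenarios(observed, covered, top_n=2)
print(suggestions)
```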

What This Really Means for Modernization Teams

Application modernization is about reducing technical debt and improving agility.

AI-based testing tools such as ACCELQ’s Autopilot support that by:

- Making automation resilient to change

- Scaling coverage across complex architectures

- Reducing maintenance effort

- Improving regression efficiency

- Aligning testing with evolving systems

They don’t replace testers. They amplify them.

The goal isn’t autonomous perfection. It’s intelligent acceleration.

Modernization increases change velocity. AI-based testing ensures quality keeps pace with that velocity.

And that’s the difference between a transformation that stalls and one that scales.