For both training and inference, ChatGPT relies on very large amounts of water for cooling. Training GPT-3 took an estimated 700,000 liters of fresh water, and every 20–50 user prompts indirectly consume around 500 ml, most of it through data center cooling systems.

Why AI’s Water Use Matters

AI has changed how we search, work, and create content. But behind the convenience is a largely hidden environmental cost: water use.

Each prompt to ChatGPT is served by enormous data centers. These facilities generate heat, and many use evaporative cooling fed by local freshwater sources.

In 2023, researchers showed that AI water consumption has become a significant sustainability concern, particularly as global water shortages intensify.

How Much Water Does ChatGPT Actually Use?

Training Phase

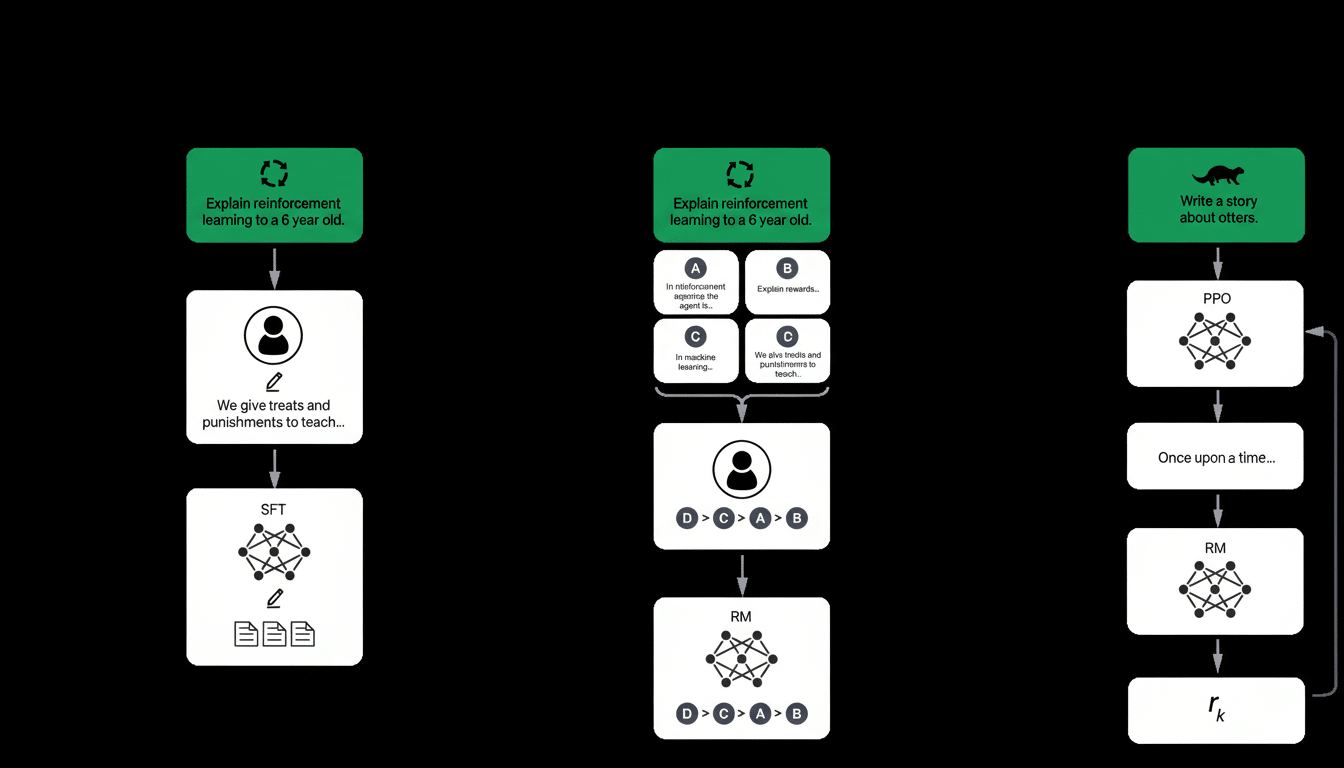

Training large AI models such as GPT-3 or GPT-4 takes thousands of GPUs running for weeks. Researchers at UC Riverside and the University of Texas at Arlington estimated that training GPT-3 took ~700,000 liters of clean fresh water, roughly the amount needed to manufacture 370 BMW cars.

Inference (User Interactions)

Water use continues after training. A 2023 study calculated that every 20–50 ChatGPT requests indirectly use ~500 ml of water. At scale, millions of queries every day add up to a major AI water footprint.
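
The per-prompt figure implied by this estimate is simple arithmetic. Here is a minimal sketch in Python. The function names and the daily prompt volume are illustrative assumptions, not figures from the study:

```python
# Rough per-prompt water estimate from the figure quoted above:
# about 500 ml per 20-50 prompts. Function names are hypothetical.

WATER_PER_BATCH_ML = 500.0
PROMPTS_PER_BATCH = (20, 50)  # low and high ends of the quoted range


def ml_per_prompt():
    """Return (low, high) milliliters of water per single prompt."""
    fewest, most = PROMPTS_PER_BATCH
    # More prompts per 500 ml means less water per prompt.
    return WATER_PER_BATCH_ML / most, WATER_PER_BATCH_ML / fewest


def daily_liters(prompts_per_day):
    """Scale the per-prompt range to a daily volume, in liters."""
    low, high = ml_per_prompt()
    return prompts_per_day * low / 1000.0, prompts_per_day * high / 1000.0


if __name__ == "__main__":
    low, high = ml_per_prompt()
    print(f"{low:.0f}-{high:.0f} ml per prompt")
    # 10 million prompts per day is an assumed volume, for illustration only.
    d_low, d_high = daily_liters(10_000_000)
    print(f"{d_low:,.0f}-{d_high:,.0f} liters per day")
```

Under these assumptions a single prompt works out to roughly 10–25 ml, so the daily total depends almost entirely on the query volume plugged in.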

Why A.I. Needs So Much Water

- Data Center Cooling: Microsoft Azure and Google Cloud data centers use water-based evaporative cooling to dissipate heat.

- GPU Power Consumption: High-power GPUs generate substantial heat that must be removed continuously.

- Regional Impact: A query served from Iowa or Arizona has a higher water footprint than one served from Iceland, where the cool climate makes cooling naturally efficient.
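
One common way to reason about the regional difference is water usage effectiveness (WUE), a data center metric expressed in liters of water per kWh of IT energy. The sketch below is illustrative only: the site labels and WUE values are placeholders chosen to show the shape of the calculation, not measurements of any real facility, and the function name is hypothetical.

```python
# Illustrative model only: on-site cooling water = IT energy x WUE,
# where WUE (water usage effectiveness) is in liters per kWh.
# The WUE values below are placeholders, not data for any real site.

PLACEHOLDER_WUE_L_PER_KWH = {
    "hot_dry_site": 1.8,
    "cool_climate_site": 0.2,
}


def cooling_water_liters(energy_kwh, wue_l_per_kwh):
    """Estimate cooling water in liters for a given IT energy use."""
    if energy_kwh < 0 or wue_l_per_kwh < 0:
        raise ValueError("energy and WUE must be non-negative")
    return energy_kwh * wue_l_per_kwh


if __name__ == "__main__":
    # Same workload (1,000 kWh, an assumed figure) at two placeholder sites.
    for site, wue in PLACEHOLDER_WUE_L_PER_KWH.items():
        print(site, cooling_water_liters(1000.0, wue), "liters")
```

The point of the model is that identical workloads can have very different water footprints depending on where they run.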

Comparing ChatGPT’s Water Use to Other Activities

- 1 ChatGPT session (20–50 prompts): ~500 ml, about one bottle of water

- Training GPT-3: ~700,000 liters (roughly the water needed to manufacture 370 BMW cars)

- 1 hour of Netflix HD streaming: ~0.25–0.5 liters

- Smartphone charging over 1 year: ~1,000 liters (indirect, via electricity generation)
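
To put the two headline figures on the same scale, the 700,000-liter training estimate can be expressed in 500 ml sessions and in U.S. gallons. This small sketch uses only the figures quoted in this article plus the standard definition of the U.S. gallon. The helper names are made up for illustration:

```python
# Unit conversions for the figures quoted in the article.
LITERS_PER_US_GALLON = 3.785411784  # exact by definition

TRAINING_LITERS = 700_000  # quoted estimate for training GPT-3
SESSION_LITERS = 0.5       # quoted estimate for one 20-50 prompt session


def liters_to_gallons(liters):
    """Convert liters to U.S. liquid gallons."""
    return liters / LITERS_PER_US_GALLON


def sessions_equal_to_training():
    """Number of 500 ml sessions that match the training estimate."""
    return TRAINING_LITERS / SESSION_LITERS


if __name__ == "__main__":
    print(f"{liters_to_gallons(TRAINING_LITERS):,.0f} U.S. gallons")
    print(f"{sessions_equal_to_training():,.0f} sessions")
```

On these numbers, training corresponds to about 1.4 million 20–50 prompt sessions, and 700,000 liters is roughly 185,000 U.S. gallons, which is why mixing up the two units changes the figure substantially.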

2025 Updates

- Microsoft → aiming for a 96% reduction in data center water consumption by 2030 (via immersion cooling).

- Google DeepMind → experimenting with water-efficient AI models.

- OpenAI → now required to publish official water consumption reports.

The Sustainability Debate

Environmental organizations argue that AI companies should report water use transparently, as they do carbon emissions.

Proposed solutions include:

- Alternative cooling methods such as immersion cooling and seawater cooling.

- Siting AI data centers in cooler latitudes.

- Efficiency improvements to AI models that reduce GPU workload.

FAQs: How Much Water Does ChatGPT Use?

Why does ChatGPT require water?

Water cools the data centers that run ChatGPT, and this cooling is needed to prevent overheating during training and inference.

How much water per question?

Roughly 500 ml per 20–50 prompts, or about 10–25 ml per prompt, depending on the location and the cooling system.

Does GPT-4 consume more water than GPT-3?

Most likely, yes. GPT-4 is a larger model trained at a larger scale, which implies a higher training water footprint, though exact numbers are undisclosed.

Which companies are behind ChatGPT?

ChatGPT is developed by OpenAI.

The infrastructure layer is provided by Microsoft Azure and its global data centers.

Can Artificial Intelligence become water-efficient?

Yes. Immersion cooling and renewable-energy data centers would reduce water consumption.

Is ChatGPT less efficient than Google Search for water use?

Yes, per query. A conventional search uses less energy and water than a query to a large AI model.