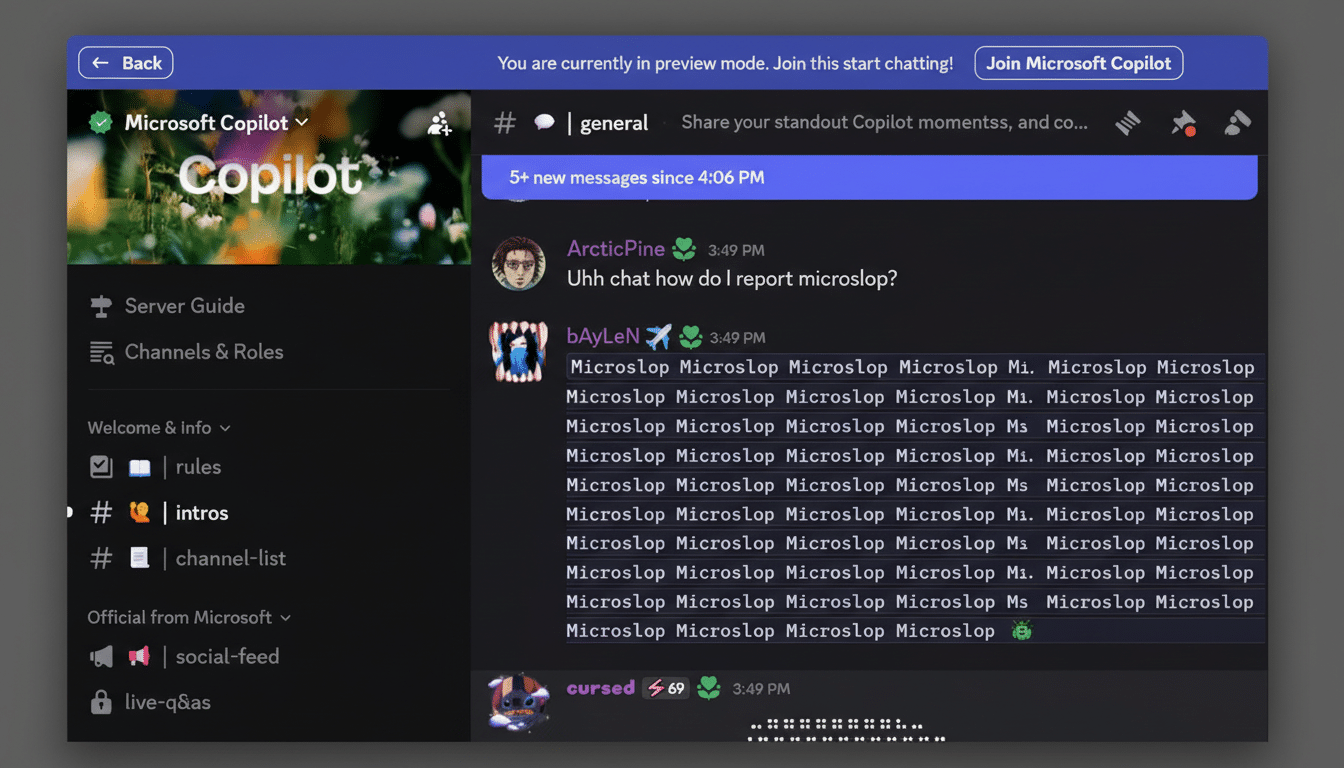

Microsoft’s attempt to scrub the word “Microslop” from its official Copilot Discord server did the opposite of what moderators intended. After users noticed that messages containing the term were being silently blocked as “inappropriate,” the community quickly routed around the filter with leetspeak like “m1cr0sl0p,” prompting admins to relax the rule, at which point the floodgates opened.

The episode, flagged by community watchers and reported by Windows Latest, underscores a familiar lesson in online culture management: trying to ban a catchy insult can supercharge its spread, especially in fast-moving chat environments with a taste for in-jokes and performative pile-ons.

What Sparked the Copilot Discord Word Filter Decision

“Microslop” has become a shorthand jab at AI bloat and low-quality outputs, a meme that took off as Microsoft pushed Copilot across Windows, Edge, and Microsoft 365. The company’s leadership has tried to steer the conversation toward “sophistication” rather than “slop,” as the CEO argued in a December blog post, but sentiment on public forums remains mixed.

The decision to block the word on Copilot’s own Discord likely aimed to keep discussions constructive and to protect brand perception in an official venue. The problem: word filters treat a symptom, not the cause. Within hours, users transformed moderation into a game, discovering bypasses and publicizing them across channels.

How the Streisand Effect Amplified the Banned Term

Once the block was lifted, references to “slop” multiplied, accompanied by the usual churn of off-topic memes, edgy image spam, and provocations that no marketing team wants near a flagship AI product. Members reported that the chaos spilled into multiple channels, including product-specific discussion areas and suggestion threads, as brigading accelerated.

It’s a classic Streisand Effect scenario: the attempt to suppress a term elevated it into the day’s headline for the server. Moderators were suddenly playing defense across multiple fronts, with users seizing on the moment to critique everything from Copilot’s quality to Microsoft’s online bedside manner.

Why Single-Word Bans Frequently Fail on Discord Servers

Discord’s moderation toolkit includes AutoMod for keyword blocking, regex matching, and attachment controls. Those tools work best against routine spam, but they’re brittle against motivated communities. Users can swap characters, insert accents, or embed text in images and screenshots. The result is a never-ending whack-a-mole that burns moderator time without changing behavior.
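To make the brittleness concrete, here is a minimal Python sketch of a substring filter and a "hardened" variant that normalizes text before matching. It is an illustration only and does not reflect how Discord's AutoMod works internally; the names `BLOCKED`, `LEET`, `naive_match`, `normalize`, and `normalized_match` are hypothetical, as is the small leetspeak table.

```python
import unicodedata

# Hypothetical blocklist for illustration; not taken from any real server config.
BLOCKED = {"microslop"}

# A small, assumed leetspeak table. Real evasion uses far more substitutions.
LEET = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})


def naive_match(message: str) -> bool:
    """Case-insensitive substring check, roughly what a plain keyword rule does."""
    lowered = message.lower()
    return any(term in lowered for term in BLOCKED)


def normalize(message: str) -> str:
    """Strip accents, fold case, undo common leetspeak, drop non-alphanumerics."""
    decomposed = unicodedata.normalize("NFKD", message)
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    folded = no_accents.casefold().translate(LEET)
    return "".join(ch for ch in folded if ch.isalnum())


def normalized_match(message: str) -> bool:
    """Substring check against the normalized message."""
    text = normalize(message)
    return any(term in text for term in BLOCKED)


if __name__ == "__main__":
    samples = [
        "Microslop again",
        "m1cr0sl0p",
        "M\u00edcr\u00f6slop",      # accented Latin letters
        "m i c r o s l o p",        # spaced out
        "m\u0456croslop",           # Cyrillic 'і' in place of Latin 'i'
    ]
    for s in samples:
        print(repr(s), naive_match(s), normalized_match(s))
```

Normalization catches the leetspeak, accented, and spaced-out variants, but the Cyrillic look-alike still slips through, and text embedded in an image never reaches the filter at all. Removing spaces and punctuation also raises the risk of false positives, because a match can now span unrelated adjacent words. Each new rule closes one gap while users find the next.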

Discord’s transparency reports routinely cite massive enforcement actions against spam and raids, illustrating the scale of the challenge. The platform’s own safety guidance emphasizes layered defenses—rate limits, channel lockdowns, verified roles, and human review—over single-word filters. In practice, communities respond to signals, not just rules; visible censorship can become a rallying point.

History offers parallels. From the “Boaty McBoatface” naming saga to Reddit’s API protests, communities will invert attempts at top-down control into bottom-up commentary. Tech brands are especially vulnerable when the underlying grievance—a sense that AI is being pushed too fast or is underperforming—already has cultural momentum.

Where Brand Safety Concerns Collide with Growing AI Skepticism

The “slop” rhetoric reflects a deeper critique: that generative AI can be spammy, derivative, or unsafe without careful guardrails. Surveys from organizations like Pew Research Center show that public enthusiasm for AI is tempered by broad concerns. On a first-party channel like Copilot’s Discord, those anxieties surface as sharp memes and testy feedback—and attempts to silence them risk validating the critique.

For Microsoft, the reputational risk is twofold. There’s the immediate optics of losing control of the narrative on a branded server. And there’s the strategic cost of appearing reflexively censorious at the very moment the company is asking developers, educators, and enterprises to trust its AI stack.

What Microsoft Can Do Next to Stabilize Copilot’s Discord

Short term, a cooling-off period makes sense: slowmode, channel-specific lockdowns, or a temporary shutdown to reset rules and staffing. Focus filters on behavior (spam volume, repetitive posting, link farming), not a single hot-button word. Elevate verified feedback channels for bug reports and product ideas to give frustrated users somewhere productive to go.
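A behavior-focused filter of the kind described above could look something like the following Python sketch. It flags a user for posting too many messages in a sliding time window or for repeating the same message, without inspecting any particular word. The class name `BehaviorFilter`, its thresholds, and the flag labels are all assumptions made for illustration; this is not Discord's API, and a real bot would wire the check into its own message handler.

```python
from collections import defaultdict, deque


class BehaviorFilter:
    """Flags posting behavior (volume, repetition) rather than specific words."""

    def __init__(self, max_messages=5, window_seconds=10.0, max_repeats=3):
        self.max_messages = max_messages      # messages allowed per window
        self.window_seconds = window_seconds  # sliding window length
        self.max_repeats = max_repeats        # identical messages that trigger a flag
        # user_id -> deque of (timestamp, normalized_text) inside the window
        self._history = defaultdict(deque)

    def check(self, user_id, text, now) -> list:
        """Record a message and return a list of flags ('rate', 'repeat')."""
        history = self._history[user_id]

        # Evict entries that have aged out of the sliding window.
        while history and now - history[0][0] > self.window_seconds:
            history.popleft()

        normalized = text.strip().casefold()
        history.append((now, normalized))

        flags = []
        if len(history) > self.max_messages:
            flags.append("rate")

        repeats = sum(1 for _, seen in history if seen == normalized)
        if repeats >= self.max_repeats:
            flags.append("repeat")
        return flags


if __name__ == "__main__":
    f = BehaviorFilter(max_messages=3, window_seconds=10.0, max_repeats=2)
    for ts, msg in [(0, "a"), (1, "b"), (2, "a"), (3, "c"), (20, "d")]:
        print(ts, msg, f.check("user-1", msg, ts))
```

Timestamps are passed in explicitly so the logic can be tested deterministically; a live bot would likely supply `time.monotonic()` instead. What happens after a flag (slowmode, a timeout, escalation to a human moderator) is a policy decision left outside this sketch.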

Longer term, pair rigorous trust-and-safety coverage with visible engagement from Copilot leads. Host scheduled AMAs with clear scope and moderation, publish transparent content policies, and acknowledge contentious topics head-on. If “slop” is the meme, the counter isn’t a blacklist—it’s demonstrably better models, documented safeguards, and a cadence of improvements that shifts the conversation.

The takeaway is blunt but useful: culture moves faster than filters. Microsoft’s best defense against “Microslop” isn’t a ban; it’s building an AI experience that leaves the joke with nothing to stick to.