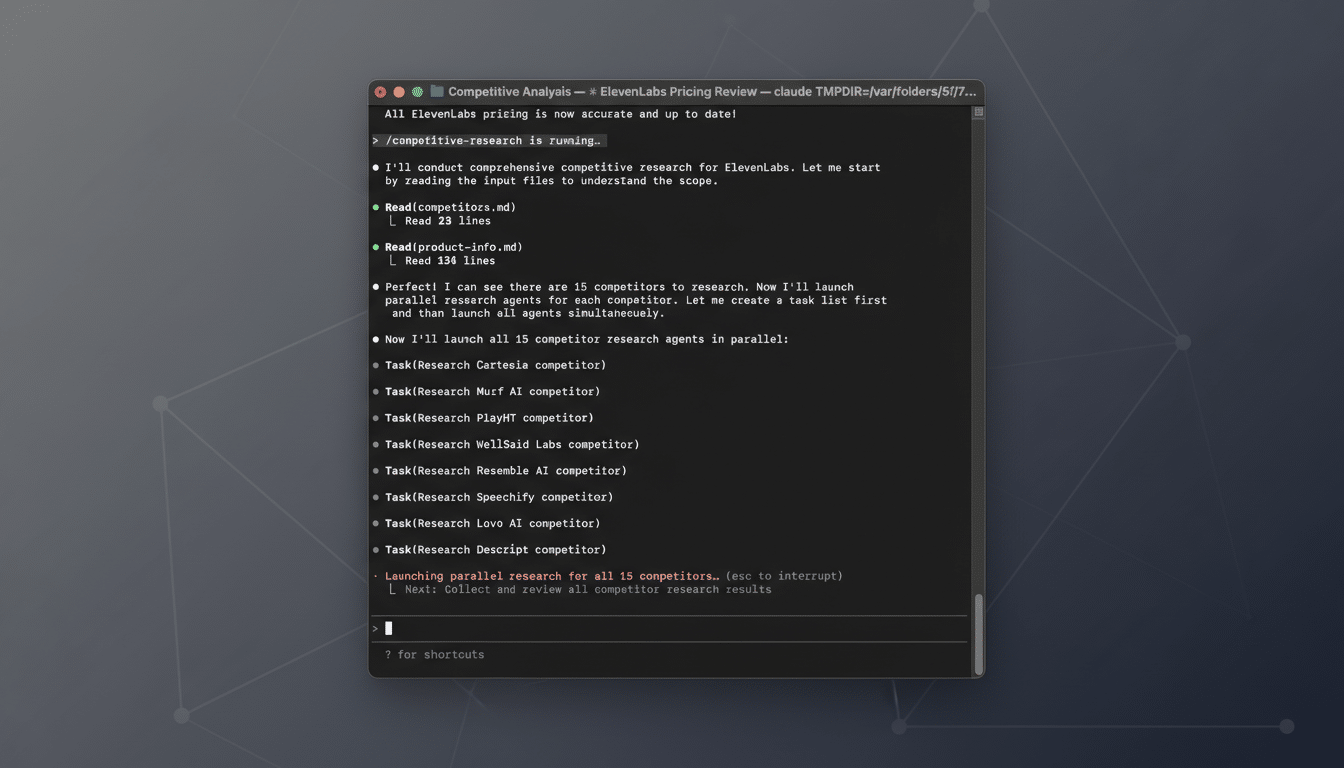

Anthropic has begun rolling out Voice Mode for Claude Code, bringing hands-free, conversational control to its AI coding assistant. The feature lets developers speak natural commands—then watch the assistant propose edits, explain code, or generate tests—aiming to cut friction and speed up common workflows.

An Anthropic engineer, Thariq Shihipar, noted on X that access is starting with about 5% of users and will expand in stages. Early testers will see an in-app prompt when they’re included. Once enabled, typing “/voice” toggles the mic, and spoken requests like “refactor the authentication middleware” or “explain this failing test” trigger immediate assistance.

Anthropic hasn’t yet detailed technical constraints such as interaction caps, latency targets, or supported languages, and it hasn’t disclosed whether a third-party speech partner like ElevenLabs is involved. Those choices will shape performance, privacy guarantees, and enterprise readiness.

Why voice-driven coding matters for developer workflows

Developers constantly context-switch between code, docs, terminals, and chats. Voice can collapse some of that overhead. Saying “scan the repo for deprecated API calls and propose a patch” or “summarize what this service does and list its riskier paths” is faster than hunting through files and prompts. It also expands accessibility for engineers with repetitive strain injuries or those who prefer verbal ideation before committing code.

Evidence is mounting that AI pair programmers reduce time-to-completion and increase flow. GitHub has reported that, in a controlled experiment, developers using Copilot completed a set coding task 55% faster than those working without it. Layering real-time speech on top of code suggestions could amplify those gains by removing typing and navigation barriers, especially during exploratory refactors or multi-file changes where narration beats keystrokes.

Human–computer interaction researchers, including teams at Stanford and groups publishing at venues like ACM CHI, have explored speech-driven programming for years. Two consistent takeaways: voice shines for intent (“what” to change) and explanation (“why” a change matters), while precision editing (“where” and exact syntax) still benefits from a review step. Claude Code’s approach—speak request, receive a proposed diff or plan, then approve—fits that playbook.

How Claude Code’s new Voice Mode functions in practice

Voice Mode converts speech to text, feeds intent to the coding agent, and returns an action plan or patch. The “/voice” toggle keeps developers in control, allowing quick mic-on sessions for bursty tasks: “stub out integration tests for the payments flow,” “explain this stack trace like I’m new to the codebase,” or “generate a safe migration for users.email.” The assistant’s proposals can be reviewed before changes land, preserving code review discipline.
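
The flow described above (toggle the mic, transcribe, propose, then approve) can be sketched in a few lines of Python. This is an illustrative model only, not Anthropic's implementation: `VoiceSession`, `Proposal`, `fake_transcribe`, and `fake_propose` are hypothetical names, and the two callables stand in for whatever speech-to-text engine and coding agent actually sit behind the feature.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Proposal:
    """A proposed change that must be approved before it is applied."""
    request: str  # the transcribed spoken request
    diff: str     # the agent's proposed plan or patch


@dataclass
class VoiceSession:
    # Hypothetical stand-ins: a speech-to-text function and a coding agent.
    transcribe: Callable[[bytes], str]
    propose: Callable[[str], str]
    mic_on: bool = False
    applied: List[str] = field(default_factory=list)

    def handle_command(self, text: str) -> None:
        """Typing "/voice" toggles the microphone on or off."""
        if text.strip() == "/voice":
            self.mic_on = not self.mic_on

    def handle_audio(self, audio: bytes) -> Optional[Proposal]:
        """Turn audio into a proposal; ignore audio while the mic is off."""
        if not self.mic_on:
            return None
        request = self.transcribe(audio)
        return Proposal(request=request, diff=self.propose(request))

    def review(self, proposal: Proposal, approved: bool) -> bool:
        """Nothing lands unless the developer explicitly approves it."""
        if approved:
            self.applied.append(proposal.diff)
        return approved


def fake_transcribe(audio: bytes) -> str:
    # Assumption for the sketch: the "audio" is just UTF-8 text.
    return audio.decode("utf-8")


def fake_propose(request: str) -> str:
    # Assumption for the sketch: the agent echoes a one-line plan.
    return f"# plan for: {request}"
```

The design point is the separation between `handle_audio`, which only produces a `Proposal`, and `review`, which is the single place where a change is recorded as applied. That mirrors the article's claim that proposals can be reviewed before changes land.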

Success here depends on a few hard problems: recognizing domain-specific jargon (library names, function identifiers), handling accents and noisy environments, and managing interruptions when a developer wants to correct course mid-sentence. Real-time barge-in, low-latency transcription, and a custom vocabulary tuned to the project all contribute to whether Voice Mode feels fluid or frustrating.
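
To make the "custom vocabulary" idea concrete, here is one simple way a transcript could be nudged toward a project's own identifiers: fuzzy-match each transcribed word against a list of known names. This is a sketch under stated assumptions, not a description of how Voice Mode works. The function name `apply_project_vocabulary` and the sample identifiers are hypothetical; the matching uses `difflib.get_close_matches` from Python's standard library.

```python
from difflib import get_close_matches
from typing import Iterable


def apply_project_vocabulary(
    transcript: str,
    identifiers: Iterable[str],
    cutoff: float = 0.8,
) -> str:
    """Replace words that closely resemble a known identifier.

    Matching is case-insensitive; the identifier's original casing is
    restored in the output. Words with no close match are left unchanged.
    """
    # Map lowercased identifier -> canonical spelling.
    canonical = {name.lower(): name for name in identifiers}
    corrected = []
    for word in transcript.split():
        match = get_close_matches(word.lower(), list(canonical), n=1, cutoff=cutoff)
        corrected.append(canonical[match[0]] if match else word)
    return " ".join(corrected)


# Hypothetical project identifiers and a slightly misheard transcript.
vocabulary = ["AuthMiddleware", "PaymentsFlow"]
print(apply_project_vocabulary("refactor authmiddlewear now", vocabulary))
# prints "refactor AuthMiddleware now"
```

A word-by-word pass like this ignores punctuation and cannot rejoin an identifier that the recognizer split into several words ("auth middleware"), which is part of why domain-specific jargon is listed among the hard problems.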

Competitive landscape and implications for AI code tools

AI coding assistants are a crowded arena, with GitHub Copilot, Google, OpenAI, and Cursor vying for IDE real estate. GitHub’s “Hey, GitHub!” voice control signaled early interest in spoken workflows, while multimodal models from OpenAI and Google have pushed low-latency speech interactions forward. Anthropic’s move brings voice directly into a tool many developers already use for daily coding tasks.

Adoption momentum matters. Anthropic has said Claude Code’s run-rate revenue recently surpassed $2.5 billion and that usage has climbed sharply, with weekly actives roughly doubling in a short span. The broader Claude ecosystem has also seen surges in mobile rankings following high-profile policy decisions around defense use, suggesting a widening user base that could try voice soon after it appears.

Enterprises will scrutinize the details. They’ll want to know where audio is processed, whether transcripts are stored, what retention policies apply, and if any third-party vendors touch the data. Compliance teams will look for SOC 2 reporting, data residency options, and granular controls to disable or restrict voice in sensitive repos. For some shops, on-device speech or private cloud routes could be make-or-break.

What to watch next as Claude Code voice features expand

Three signals will reveal maturity: latency under conversational thresholds (sub-second transcripts and snappy patch proposals), interruption handling (can you correct or steer mid-utterance), and code-specific accuracy (does it understand your bespoke naming and architecture). Tight IDE integrations across VS Code and JetBrains, shortcut grammars for common tasks, and custom project vocabularies would further lift real-world utility.

Anthropic previously introduced voice interactions in its general-purpose assistant, and bringing that capability to Claude Code suggests a unifying multimodal stack. Starting with a controlled 5% rollout gives the team room to tune recognition, reduce false positives, and harden privacy defaults before opening the gates wider.

For developers, the promise is simple: talk through intent, let the assistant draft precise changes, and stay in flow. If Anthropic nails reliability and trust, Voice Mode could shift from a novelty to a daily habit—another small but meaningful step toward frictionless, conversational programming.