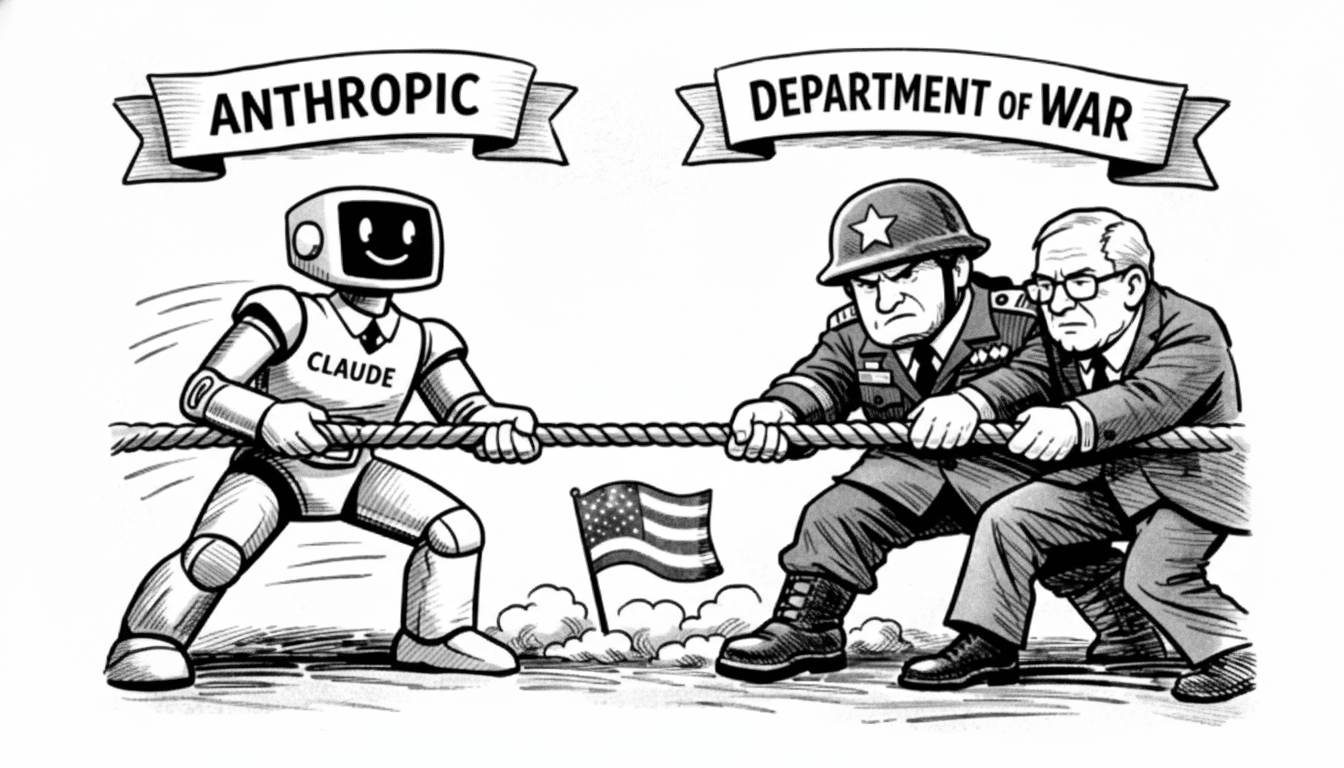

Anthropic is pushing back after the Department of War labeled the AI firm a supply-chain risk, a move that effectively chills federal use of its models. The company says the designation is legally flawed, vows to contest it in court, and argues that most commercial customers will see no disruption even as the government orders agencies to halt use of its tools.

Anthropic Pushes Back on Federal Security Label

Chief executive Dario Amodei characterized the designation as unsupported by law and process, signaling that Anthropic will seek judicial review. He also said the company remains committed to supporting national security users during any transition and noted that the vast majority of enterprise clients are unaffected by the federal action.

Amodei emphasized Anthropic’s track record of building applications for military and intelligence users, spanning intelligence analysis, modeling and simulation, operational planning, and cyber operations. He also apologized for the leak of an internal memo during the dispute and confirmed that the company has reopened talks with defense officials even as it prepares a legal challenge.

To minimize disruption, Anthropic says it will continue to provide access and engineering support to the national security community at nominal cost while agencies migrate, subject to what the government permits. The company framed this as a good-faith step to keep critical missions running while disagreements are resolved.

Contract Standoff Over AI Use Restrictions

The confrontation traces back to a major federal award that Anthropic won, reportedly worth about $200 million. According to the company, it sought enforceable guardrails barring the use of its technology for widescale domestic surveillance and for fully autonomous weapons that can engage targets without a human in the loop. The government declined to accept those terms. Officials then warned of a potential supply-chain risk designation and ultimately issued it, alongside an executive order instructing agencies to stop using Anthropic’s AI.

“Human-in-the-loop” requirements are widely cited across defense ethics frameworks, but they often stop short of contractual prohibitions. That gap—policy principle versus procurement clause—is at the heart of this showdown. Policy analysts at the Center for Security and Emerging Technology have long noted that contractual specifics, not broad guidance, determine how AI is actually deployed in the field.

The stakes are significant. Federal information technology spending exceeds $100 billion annually, according to Office of Management and Budget reporting, and AI-enabled software is quickly becoming embedded across logistics, analysis, and cyber missions. A ban on a top-tier model provider can redirect substantial budgets overnight and reshape the vendor landscape.

What a Federal Supply-Chain Risk Tag Means for AI

Supply-chain risk actions can trigger immediate procurement freezes, require removal of designated products from federal networks, and cascade to subcontractors. The Federal Acquisition Security Council can recommend exclusion or removal orders across civilian agencies, while defense procurements flow through distinct DFARS clauses and risk assessments. Past precedents—such as government-wide removals of certain cybersecurity and telecom products—show how fast a single ruling can force agencies to rip and replace technology.

For AI specifically, a risk designation can touch everything from authority-to-operate approvals to model-hosting environments, data security, and export controls. Agencies may be pushed to pivot toward alternative models, open-weight systems hosted in government clouds, or integrators that can validate stronger provenance and usage controls under NIST’s AI Risk Management Framework and supply-chain guidelines.

Rival Deals and Industry Ripples Across AI Procurement

Complicating the picture, Amodei contrasted Anthropic’s standoff with a separate government arrangement involving OpenAI, which he described as opaque, noting that even OpenAI had called aspects of it confusing. OpenAI chief Sam Altman publicly addressed user backlash over that deal, underscoring how quickly national security partnerships can spill into the court of public opinion.

For agencies, the near-term priority is continuity of operations. Many will evaluate substitute models, build redundancy across multiple providers, or shift more workloads to in-house stacks to reduce single-vendor exposure. For developers and contractors building on Anthropic’s models, the designation raises questions about existing authorities to operate (ATOs), data handling, and whether mission-critical applications must be revalidated on different underlying models.

The broader lesson extends beyond one vendor. This fight tests how far an AI company can go in writing use constraints into binding contracts with a sovereign customer, and how the government responds when a supplier draws red lines around surveillance and autonomy. Whether it is resolved through negotiation or in the courts, the outcome will set a template for AI procurement, guardrails, and supply-chain governance across the national security community.